Before GPS, how did aircraft navigate?

One important technique was celestial navigation: navigating from the positions of the stars, planets,

or the sun.

While celestial navigation is accurate, cannot be jammed, and doesn't require any broadcast infrastructure,

it is a difficult and time-consuming process to perform manually.

In the early 1960s, a system was developed for the B-52 bomber to automatically track

stars and compute navigation information.

Digital computers weren't suitable at the time, so the star tracking system performed trigonometric

calculations with an

electromechanical analog computer called the Angle Computer.1

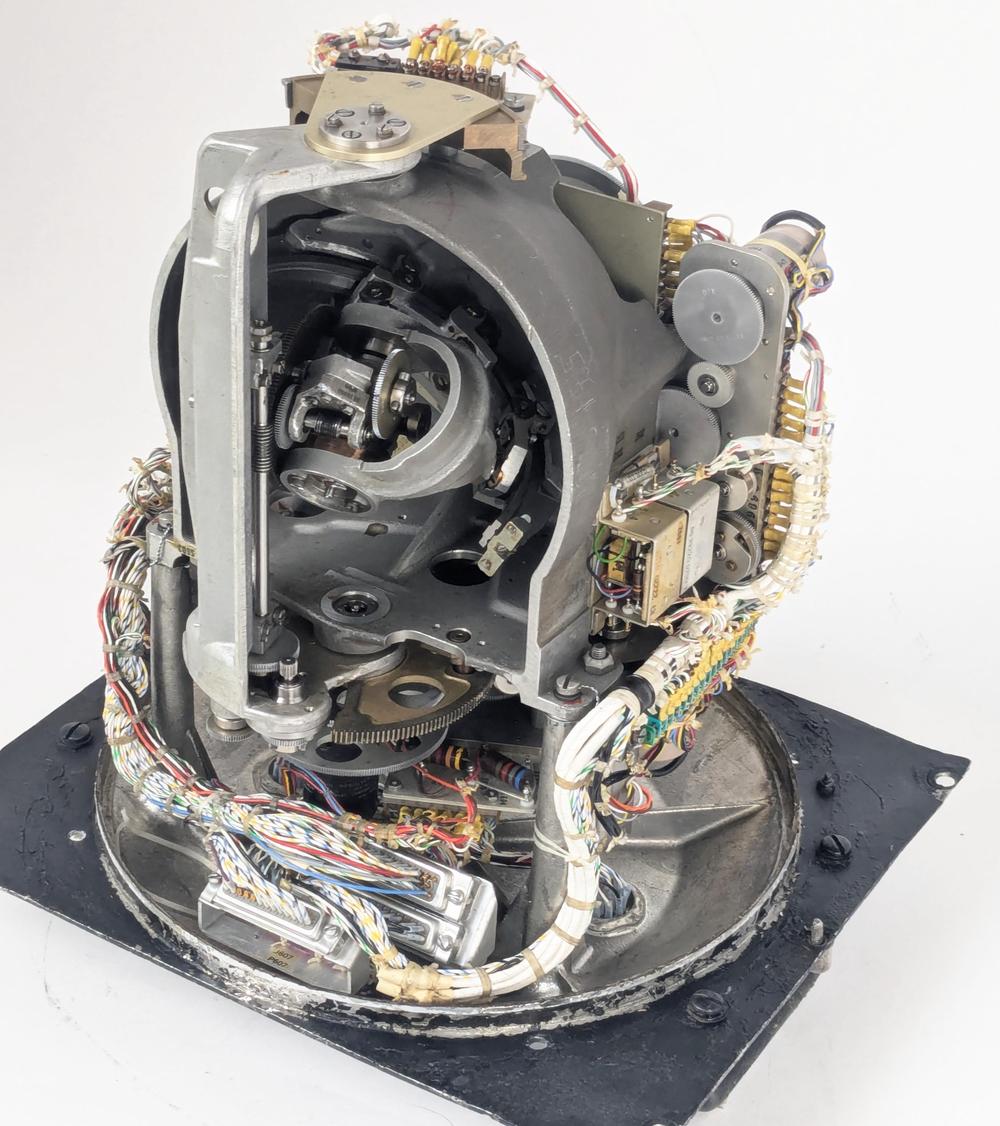

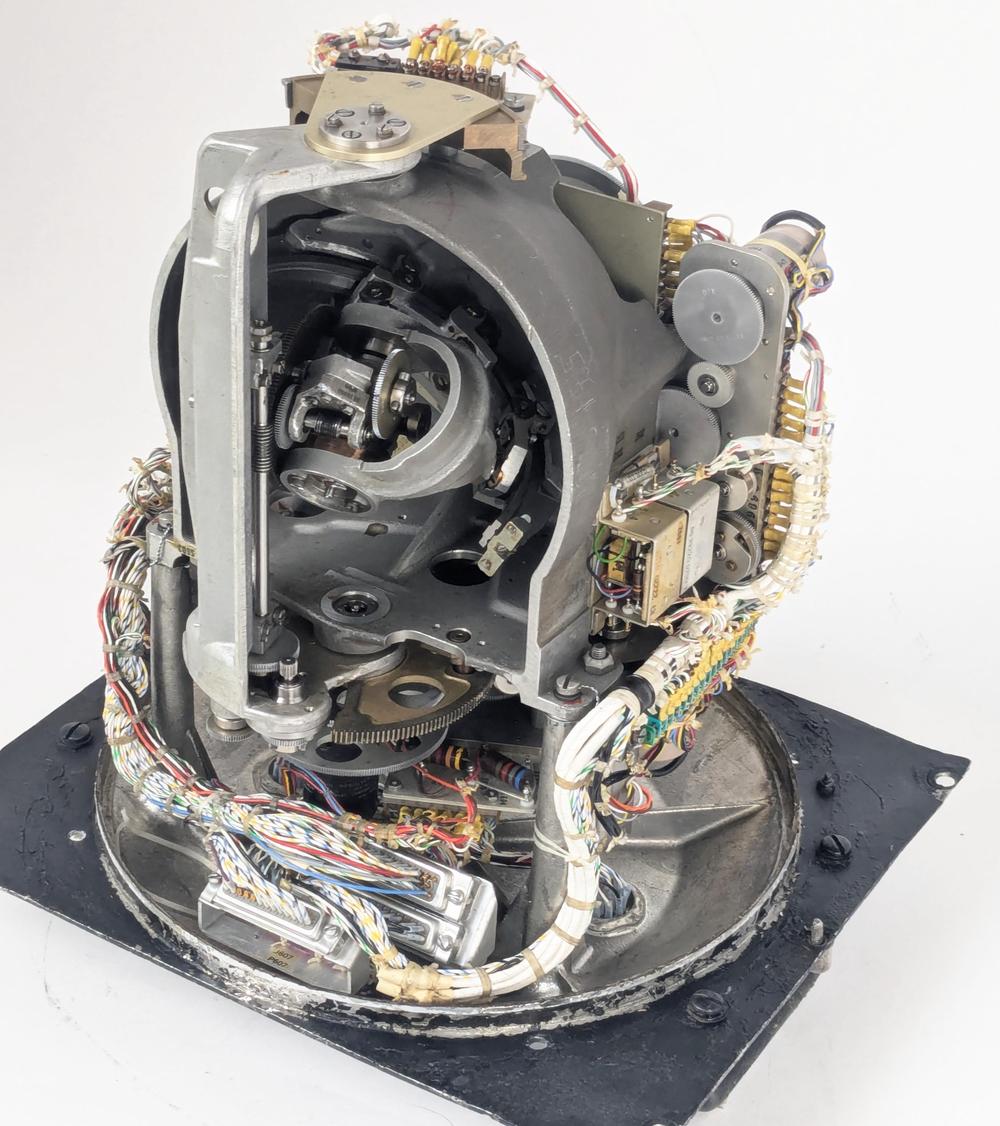

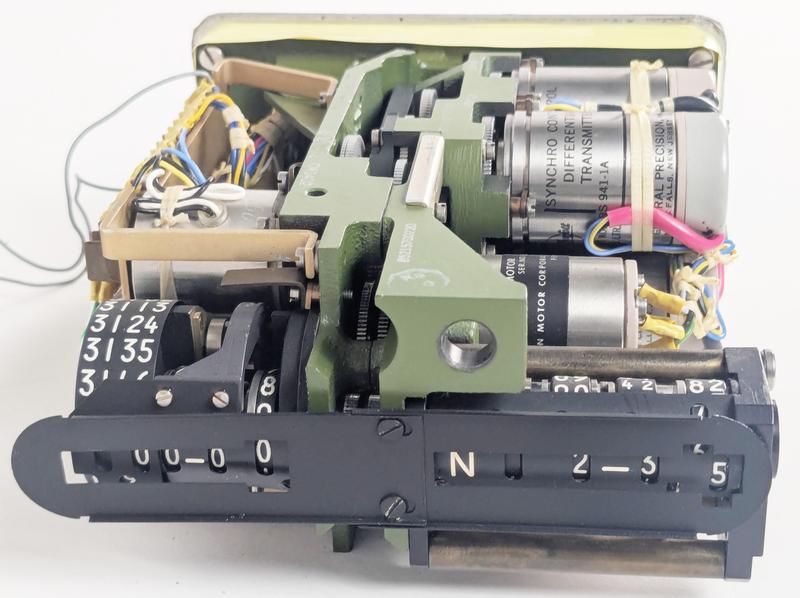

The Angle Computer contains complex electromechanical systems.

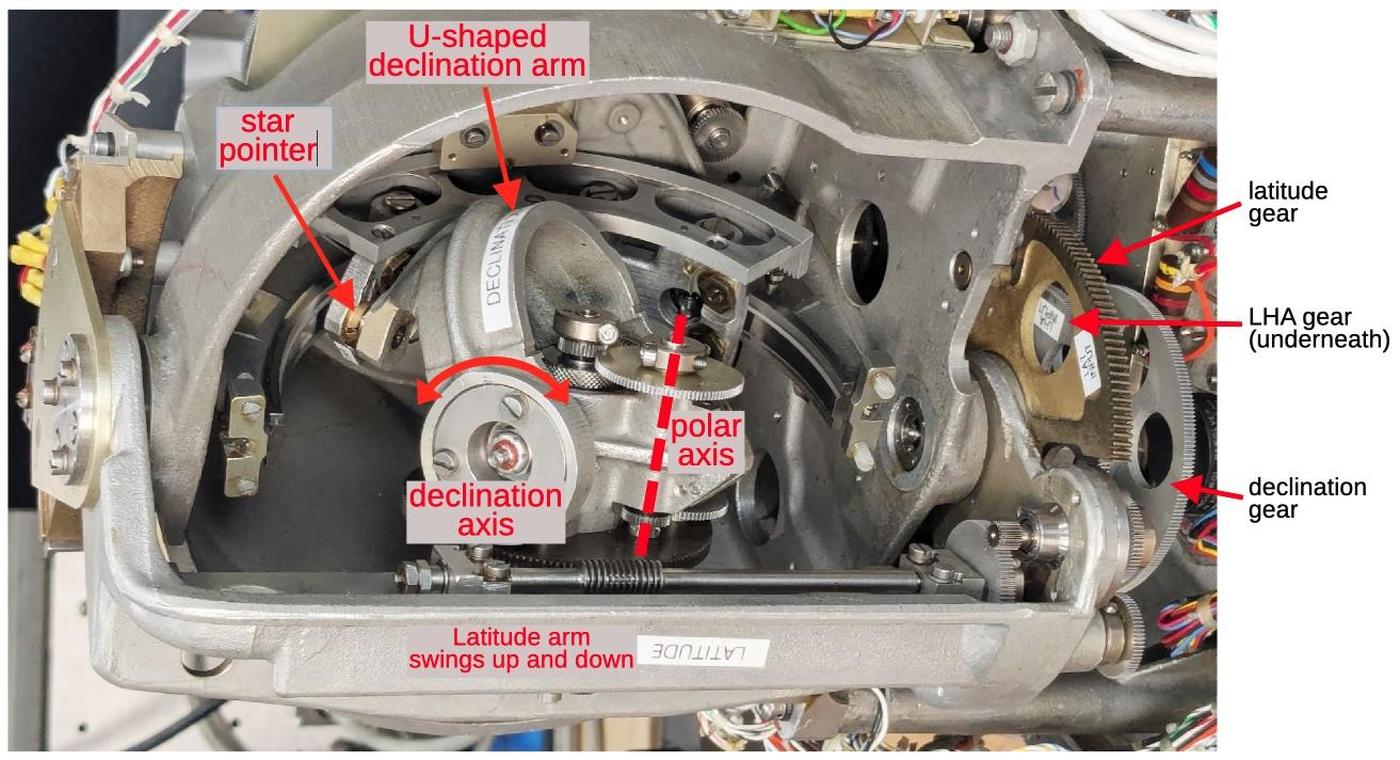

The photo above shows the mechanism inside the Angle Computer.2

Although it may look like a gyroscope or IMU (Inertial Measurement Unit), it is completely different

and nothing is spinning.

The Angle Computer

physically models the "celestial sphere", with a complicated mechanism inside that moves a

pointer that represents the position of a star.

The corresponding angles (the azimuth and altitude) are read out electrically through devices

called synchros, providing information to the navigation system through bundles of wires.

In this article, I'll give an overview of how celestial navigation works and explain how the

Angle Computer performs its calculations.

The Astro Compass system

The Angle Computer is one piece of the Astro Compass, a system that

locked onto a star and produced a highly accurate heading (i.e., compass direction), accurate to a tenth of a degree.

While the heading is the main output from the Astro Compass, the navigator can also use it to determine position, using the "lines of position" technique described later.

The Astro Tracker was mounted on top of the aircraft with the plastic bubble sticking out.

The Astro Compass navigation system was built around the "Astro Tracker" (above), the optical system that tracks a star.

The Astro Tracker was mounted on the aircraft with the 4-inch glass dome protruding from the top of the fuselage.

This unit contains a tracking telescope, which used a photomultiplier tube to detect the light from a

star.

A gyroscope and a complicated system of motors provided a "stable platform", keeping the telescope precisely vertical

even as the aircraft tilted and moved.

A prism rotated and tilted to aim the telescope at a particular star.3

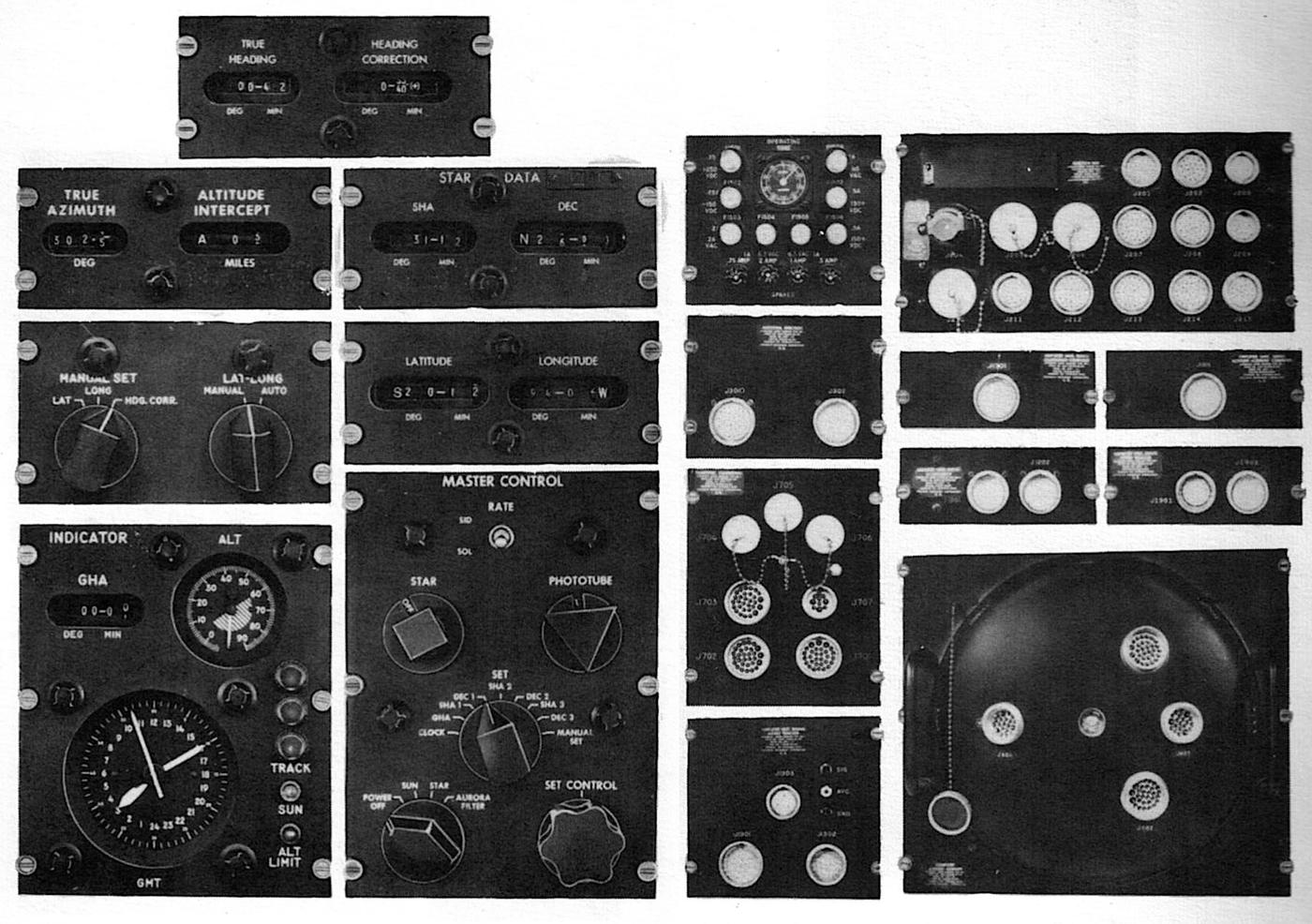

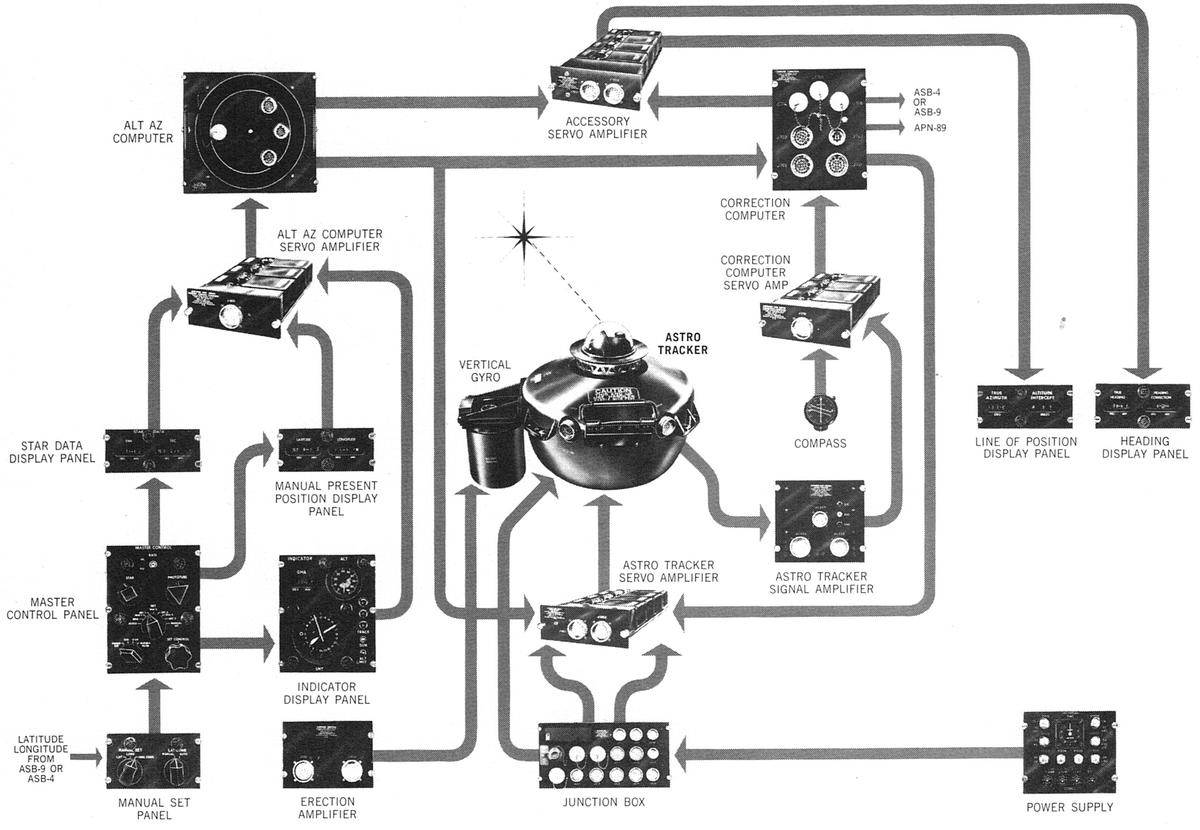

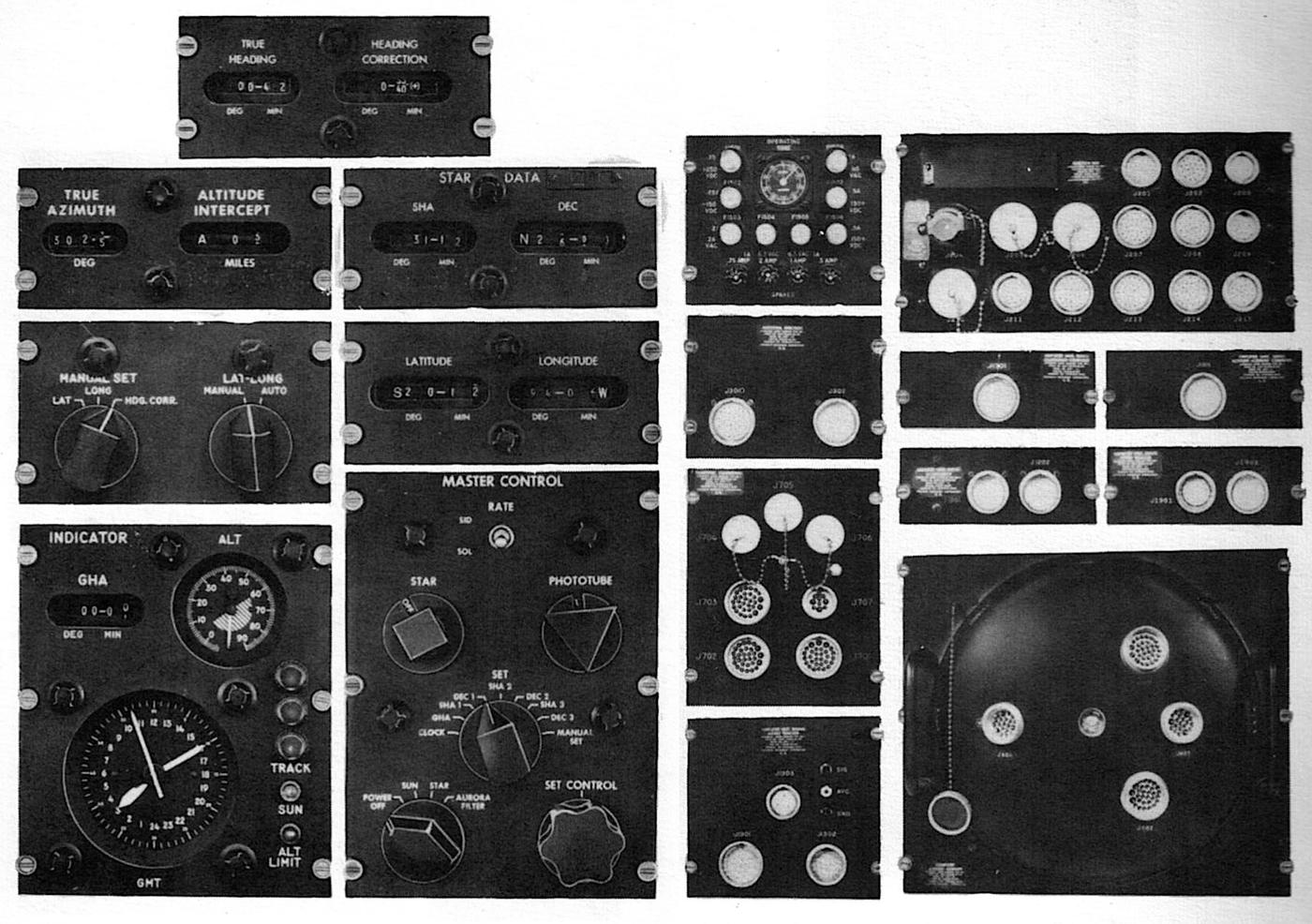

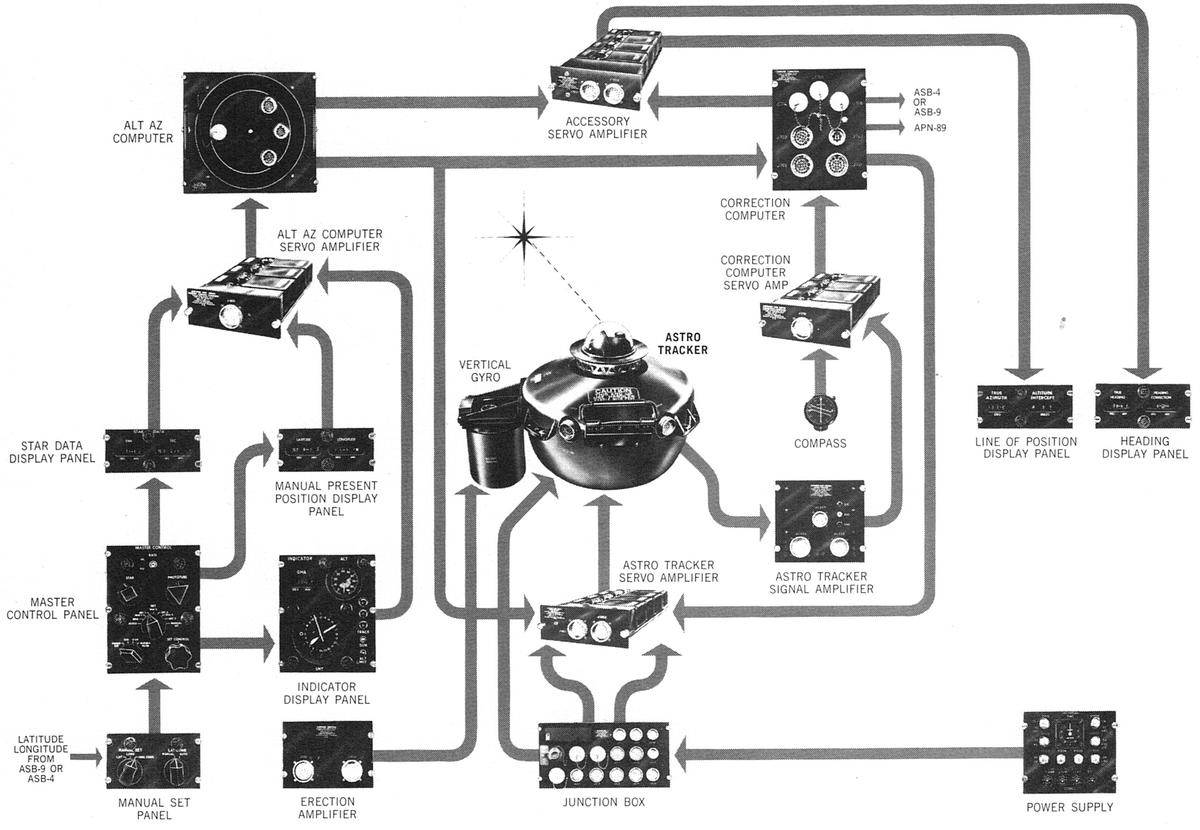

The Astro Compass system is bewilderingly complicated, consisting of 19 components (above) to support the Astro Tracker.4

On the right are the ten amplifier and computer components that controlled the system;

the Angle Computer is in the lower right.

On the left are the nine control and indicator panels that were used by the B-52's navigator.

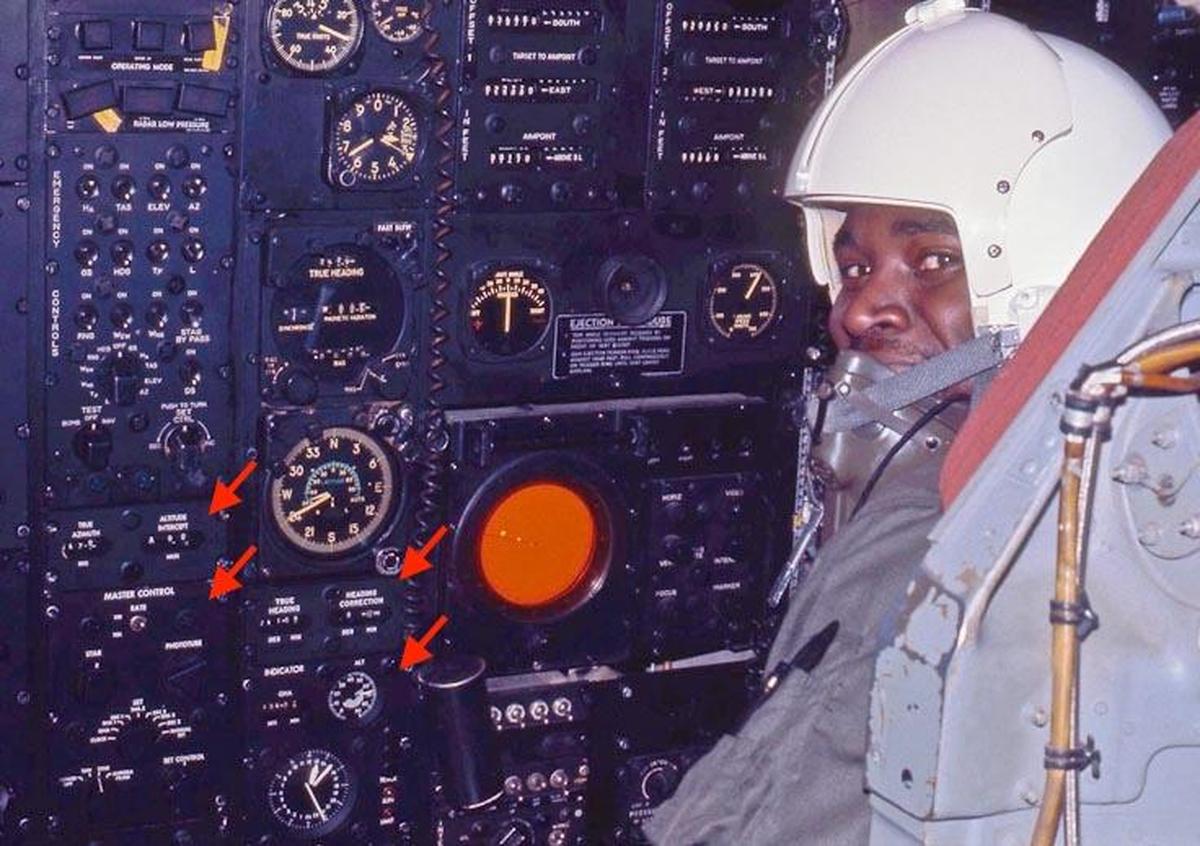

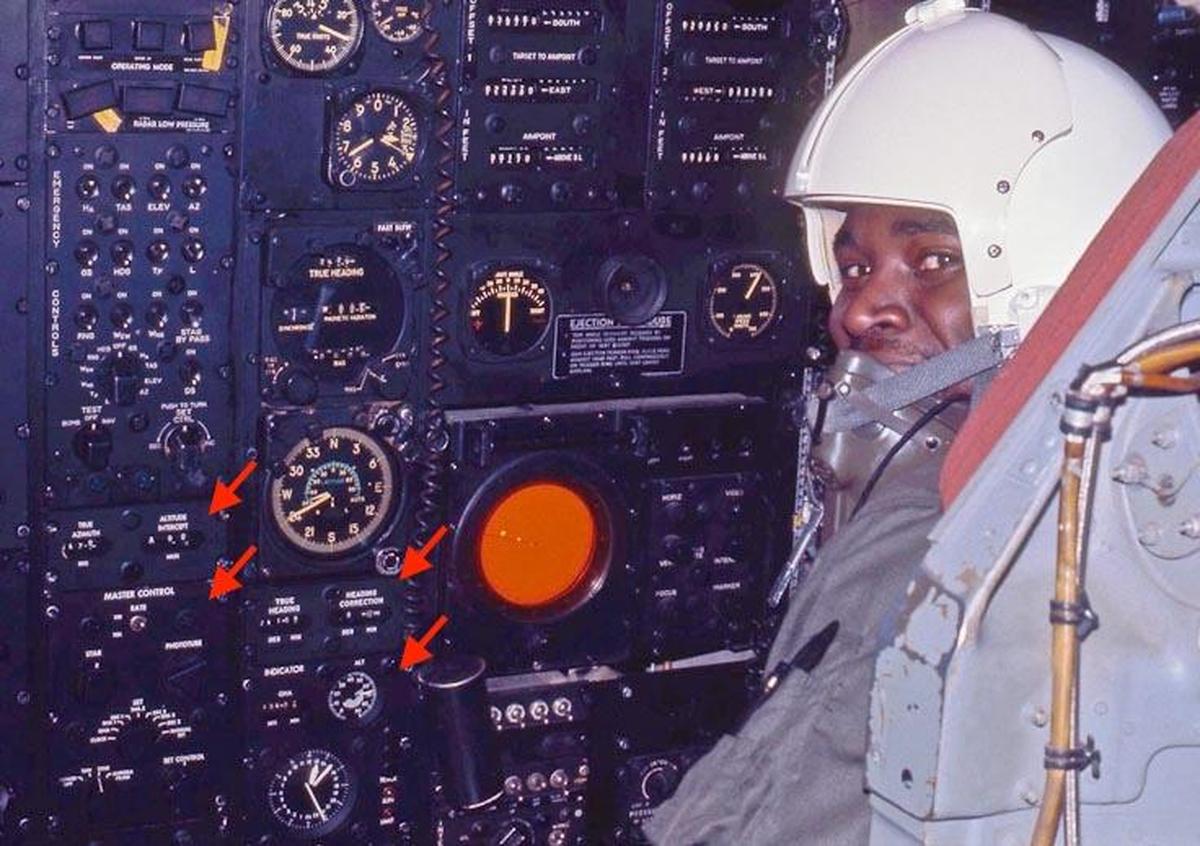

The photo below shows four of these panels in use in a B-52 in 1972.

The navigator's station in a B-52. Some of the Astro Compass controls are indicated with arrows: the Line of Position display and the Master Control on the left, and the Heading display and Indicator display to the right. The navigator in this photo is

Carl Hanson-Carnethon. From

Rob Bogash's B-52 photo album. This specific B-52 (#2584) is now at

The Museum of Flight, Seattle, but the Astro Compass is no longer present.

Controlling the Astro Compass

The Astro Compass has an interesting user interface, letting you input one value at a time by rotating a knob.

First, you use the

Master Control Panel to select a data value such as the clock time, SHA (Sidereal Hour Angle) for star #1, or

Declination for star #3.

Then you turn the "Set Control" knob clockwise or counterclockwise to scroll through the data values

until the proper value is reached.

Each knob on the Master Control Panel has a different geometrical shape, allowing the user

to distinguish the knobs by feel.

The Master Control Panel is visible in the lower left corner of the photo above, within easy reach of the navigator.

The Master Control Panel is the main interface to the Astro Compass.

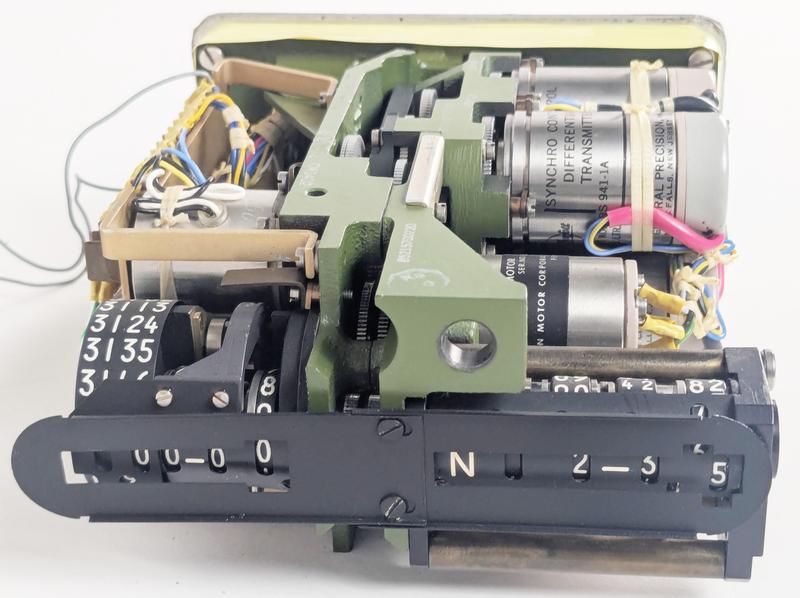

Each data value has a separate electromechanical display.

The photo below shows a Star Data display, indicating the sidereal hour angle and the declination

for a star.

I removed the cover so you can see how the digital display actually consists of analog dials rotated by motors

under synchro control.

The system has three Star Data displays, so it can

hold the positions of three stars at a time.

Getting fixes from three different stars is

useful when computing lines of position. The system uses one star at a time, but you can quickly change stars by flipping the Star switch on the Master Control Panel.

A Star Data display with the cover removed.

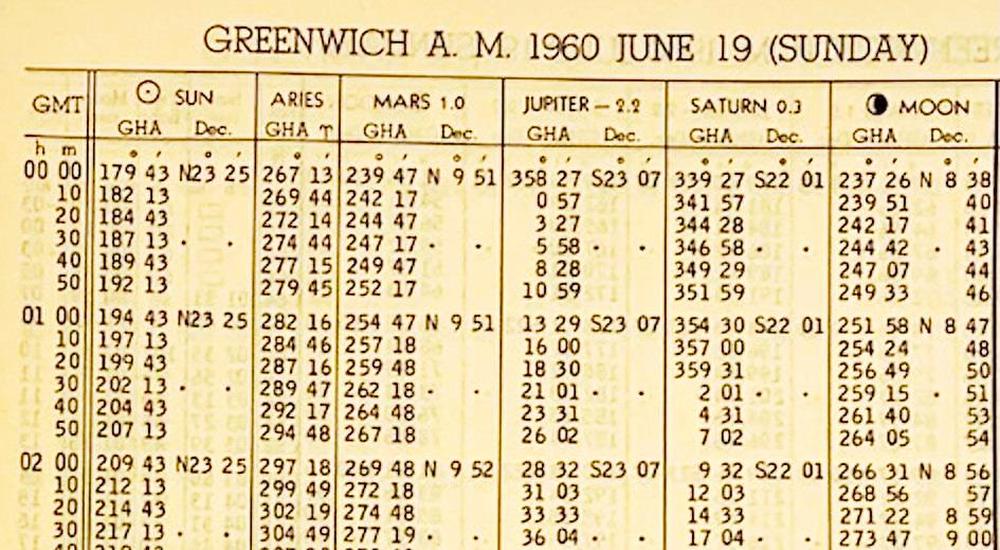

But how did the navigator obtain the information to put into the Astro Compass, since the sun, moon, stars, and planets are in constant motion?5

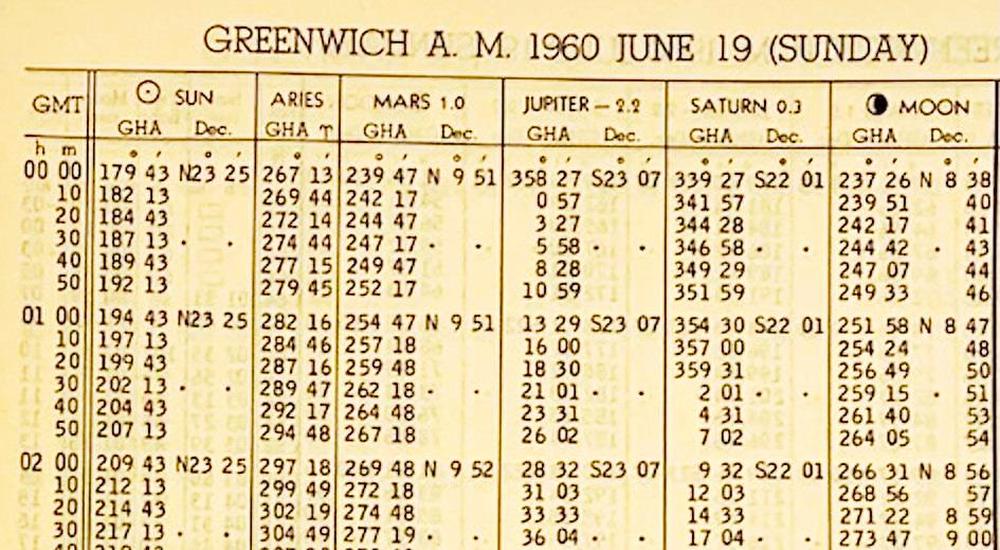

The necessary celestial information is published in a book called

the Air Almanac.

The US Government started publishing the Air Almanac in 1941, issuing a new volume every four months.

The Almanac had a sheet for each day, providing celestial data at 10-minute intervals.

The first column has the time

(GMT, Greenwich Mean Time)6 while the other columns give the position of the sun, an important value

called the First Point of Aries (symbol ♈︎), the positions of the visible planets,

and the position of the moon.

A separate table and chart provided the locations of stars; the stars don't have daily

updates since they are almost stationary.7

(The Air Almanac is now online; you can download the 2026 Air Almanac here.)

An excerpt from the 1960 Air Almanac. Photo used with permission from

tanasa2022, who is selling the Almanac on

eBay.

The navigational triangle: Computing a star's position

The Air Almanac provides star coordinates in a global coordinate system, but the Astro Compass needed to

know star coordinates in the aircraft's local coordinate system.

Determining the star's position requires changing the coordinate system by using

spherical trigonometry and something called the navigational triangle.

There's a fair bit of terminology involved, which I'll explain in this section.

The Astro Tracker, like many telescopes, is aimed by using azimuth and altitude.

Suppose you go into your yard, point at the horizon, and turn 360° in a circle; the direction

you're pointing is called the azimuth.

The point directly overhead is called the zenith.

Now swing your arm upwards 90° from the horizon to the zenith. That angle is

called the altitude.

(Confusingly, the term "altitude" is used both for the angle of a star and the height of an aircraft.)

Thus, if you point at a particular star, you can describe its position with two angles:

your horizontal rotation from north gives the azimuth, and the

angle up from the horizon gives the altitude.8

This system is called the horizontal coordinate system, as it is based on the horizon.

(The word "horizontal" comes from "horizon", by the way.)

This is a local coordinate system since

other locations will have a different azimuth and altitude for the star.

The azimuth and altitude constantly vary with time because the Earth's rotation makes the star appear to move.

The equations for the altitude and azimuth are complicated, with sines, cosines, arcsine,

and arctangent.

To see why the equations are complicated, consider a time-exposure photo of star trails.

As the Earth rotates, each star forms a circle around Polaris, the North Star.

To trace out this circular path, the altitude and azimuth vary in a trigonometric way.

This computation is performed electromechanically by the Angle Computer, as will be explained later.

Now let's switch to how the position of a star is defined in the Air Almanac (for example),

independently of your local position.

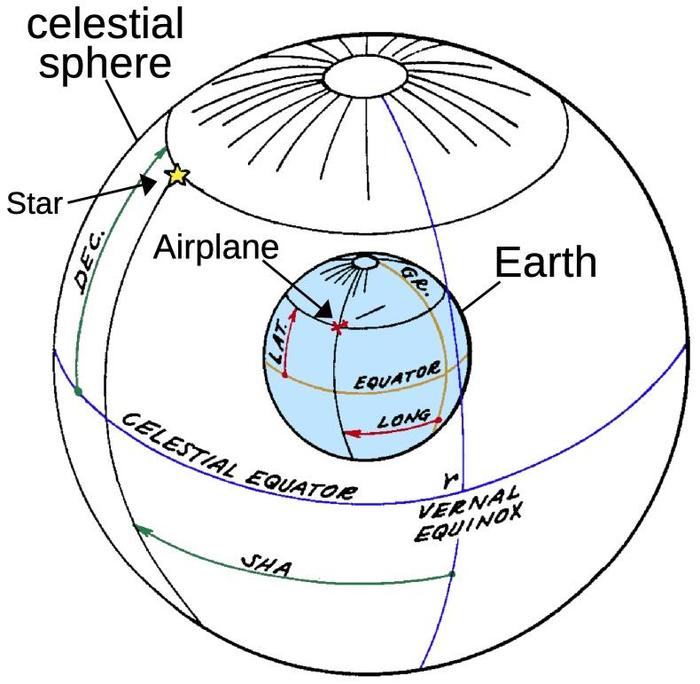

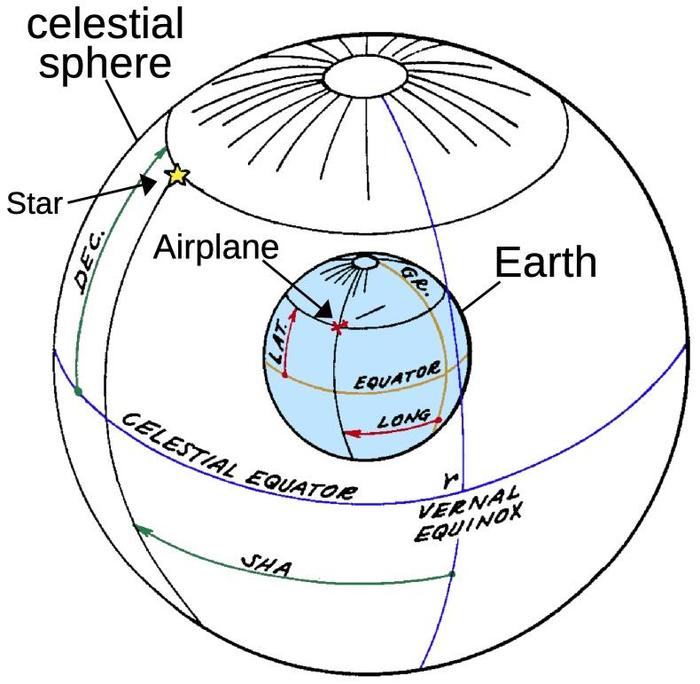

Pretend that the stars are on the surface of a large sphere that surrounds the Earth, called

the celestial sphere.

The stars are stationary on the surface of the celestial sphere, while the Earth rotates once

a (sidereal)9 day in the middle. Thus, as you look up at the celestial sphere, you see the stars moving.

You can extend the Earth's equator out to the celestial sphere, defining the celestial equator.

Likewise, the celestial sphere has celestial poles, matching the Earth's poles.

On the Earth, you specify a location (such as the airplane's location) with latitude and longitude (red).

Latitude is measured from the equator, and longitude is measured from a fixed meridian (orange).

The 0° meridian is arbitrarily defined to pass through Greenwich (England, not Connecticut).

Similarly, the position of a star is specified by the angle from the celestial equator (called declination instead of latitude) and the angle from the meridian (called the sidereal hour angle or SHA instead of longitude).10

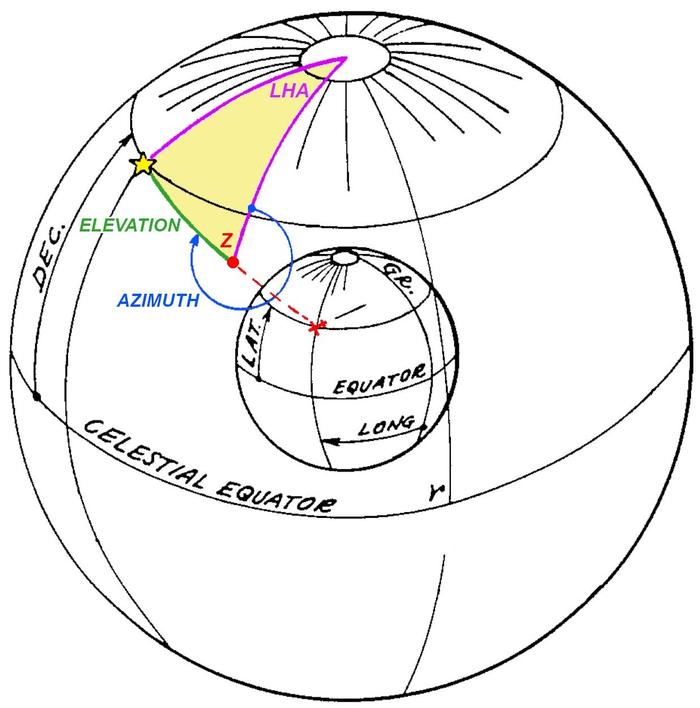

The celestial sphere, with the Earth at the center. The position of a star is described by Sidereal Hour Angle and declination, analogous to longitude and latitude describing the position of, say, an airplane on the Earth. The diagram is based on

patent 2998529, "Automatic astrocompass".

But what meridian is the starting point—0°—when measuring a star's Sidereal Hour Angle?

The celestial equator matches the Earth's equator, but this won't work for the Greenwich meridian

because it is constantly in motion.

Instead, the 0° celestial meridian is arbitrarily defined as the position where the sun crosses the equator

at the vernal equinox (the start of spring).

If you consider the position of the sun on the celestial sphere, the sun will travel around the

sphere once a year. Because the Earth's axis is tilted, the sun will be above the equator

half the year and below the equator half the year, crossing the equator at the vernal equinox (March)

and the autumnal equinox (September).

This reference point on the celestial sphere is called the First Point of Aries, represented by the symbol

♈︎ (horns of a ram); you might remember this symbol from the Air Almanac.

At this point, the sun is in the constellation Pisces.

So why is this point called the First Point of Aries and not Pisces?

Back in 130 BCE, the ancient Greek astronomer Hipparchus defined the First Point of Aries as the starting point for the sun's motion.

In that distant era,

the sun was in the constellation Aries at the equinox, not in Pisces as it is today.

It turns out that the direction of the Earth's axis isn't fixed, but moves in a 26,000-year

cycle called the precession of the equinoxes.11

A 26,000-year cycle may seem irrelevant, but it's fast enough that the sun has moved from Aries

to Pisces since Hipparchus's time.

(And the equinox has moved 1° more since the B-52 was first produced!)
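As a quick sanity check on these numbers, here is a minimal sketch of the arithmetic. It assumes the rounded 26,000-year period from the text and a constant drift rate; the function names are my own, made up for illustration.

```python
PRECESSION_PERIOD_YEARS = 26_000  # rounded cycle length quoted in the text


def precession_degrees(years: float) -> float:
    """Approximate drift of the equinox, in degrees, over a span of years."""
    return 360.0 * years / PRECESSION_PERIOD_YEARS


def years_per_degree() -> float:
    """How long the equinox takes to drift one degree at this rate."""
    return PRECESSION_PERIOD_YEARS / 360.0


# From 130 BCE to 2026 CE is 2155 years (there is no year 0).
span_years = 2026 + 130 - 1
print(f"Drift since Hipparchus: about {precession_degrees(span_years):.1f} degrees")
print(f"One degree of drift takes about {years_per_degree():.0f} years")
```

The drift since Hipparchus comes out at roughly 30°, which is one-twelfth of the circle, or about one zodiac sign.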

(All this talk of Aries and Pisces may sound like astrology, and, yes, there is a direct connection.

Aries is the first zodiac sign, starting at the vernal equinox, typically March 21. The equinox's precession is "backwards", so

the equinox has moved to Pisces, the last zodiac sign.

Astronomically, the equinox will move into the constellation Aquarius around 2600 CE, but

astrologers disagree on whether the Age of Aquarius has started;

perhaps the 1960s was the dawning of the Age of Aquarius.)

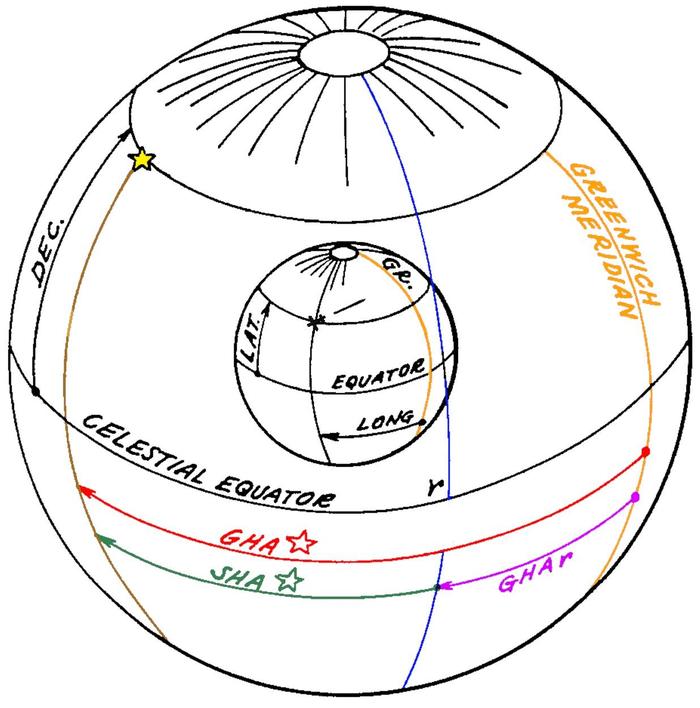

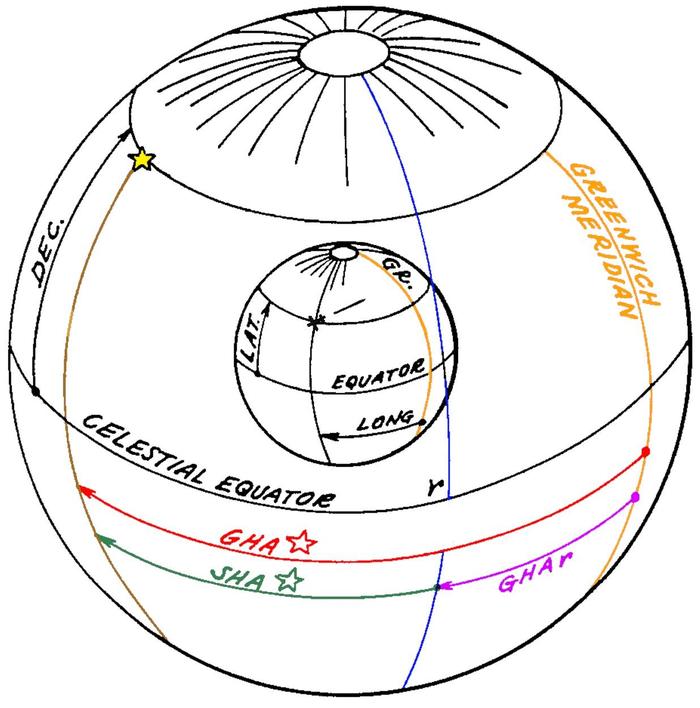

How do you convert the star's fixed coordinate to the Earth's rotating coordinate?

First, you look up the angle between the Greenwich meridian and the celestial meridian of Aries at a

particular time.

This angle (purple) is called the Greenwich Hour Angle of Aries (GHA ♈︎).

Next, you look up the star's Sidereal Hour Angle (SHA). Adding them gives you the

star's Greenwich Hour Angle (red), the angle between the Greenwich meridian and the star.

Subtracting the aircraft's longitude gives you the Local Hour Angle (LHA, not shown), the angle between

the aircraft's meridian and the star.

(Note that these steps are simply addition and subtraction, so a mechanical system can easily do

them with differential gears.)
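The addition and subtraction above can be sketched in a few lines. This is only an illustration: the function names are hypothetical, longitude is assumed to be measured westward (so it is subtracted), and the sample angles are made up rather than taken from an almanac.

```python
def greenwich_hour_angle(gha_aries_deg: float, sha_deg: float) -> float:
    """GHA of the star: GHA of Aries plus the star's SHA, wrapped to 0-360 degrees."""
    return (gha_aries_deg + sha_deg) % 360.0


def local_hour_angle(gha_aries_deg: float, sha_deg: float,
                     west_longitude_deg: float) -> float:
    """LHA of the star: its GHA minus the observer's west longitude, wrapped to 0-360."""
    gha_star = greenwich_hour_angle(gha_aries_deg, sha_deg)
    return (gha_star - west_longitude_deg) % 360.0


# Illustrative values only (not from a real almanac page).
print(greenwich_hour_angle(100.0, 280.0))    # GHA of the star
print(local_hour_angle(100.0, 280.0, 90.0))  # LHA seen from 90 degrees west
```

The modulo operation plays the role of a shaft that simply keeps turning past 360°.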

Computing the Greenwich Hour Angle of the star on the sphere.

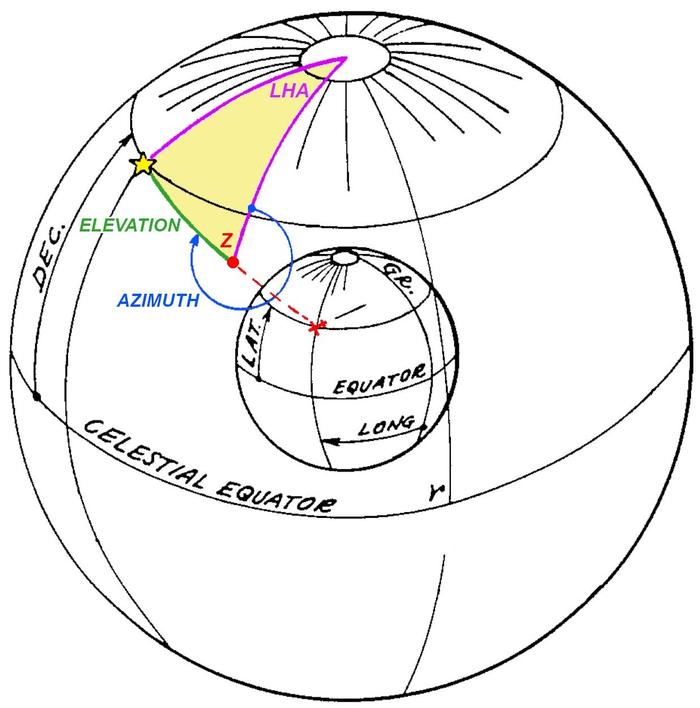

The final step, obtaining the azimuth and altitude, requires tricky spherical trigonometry.

The yellow triangle is the navigational triangle, a spherical triangle on the surface of the celestial sphere.

The upper vertex is the North Pole, the red vertex is the airplane's zenith (i.e., directly above the airplane), and the final vertex is the star.

Two sides of the triangle and an angle (purple) are known, so the remaining angles and sides can be

solved with spherical trigonometry.

Specifically, the first side (purple) is 90°-declination, the second side is 90°-latitude,12

and the angle between is the LHA (Local Hour Angle).

Solving for the angle at the zenith gives the azimuth (blue), while solving for the third side gives 90°-altitude (green, the angle down from the zenith to the star).

By solving the navigational triangle, the altitude and azimuth can be obtained.

Thus, the key problem is solving the navigational triangle.

Navigators could solve the navigational triangle by looking up angles in a thick book of "sight reduction" tables and performing some math.

But how could the process be automated? That was

the purpose of the Angle Computer.
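For comparison with the mechanical solution, here is a minimal numerical sketch of the navigational triangle using the standard spherical-trigonometry formulas. It is my own illustration, not the method used by the sight reduction tables or the Astro Compass. It assumes that LHA is measured westward from the observer's meridian and that azimuth is measured clockwise from north; the function name is hypothetical.

```python
import math


def solve_navigational_triangle(declination_deg: float, latitude_deg: float,
                                lha_deg: float) -> tuple[float, float]:
    """Return (altitude, azimuth) in degrees for a star.

    Assumes LHA is measured westward and azimuth clockwise from north.
    """
    dec = math.radians(declination_deg)
    lat = math.radians(latitude_deg)
    lha = math.radians(lha_deg)

    # Spherical law of cosines for the side opposite the LHA angle,
    # which is 90 degrees minus the altitude.
    sin_alt = (math.sin(lat) * math.sin(dec)
               + math.cos(lat) * math.cos(dec) * math.cos(lha))
    sin_alt = max(-1.0, min(1.0, sin_alt))  # guard against rounding error
    altitude = math.degrees(math.asin(sin_alt))

    # East and north components of the star's direction along the horizon.
    east = -math.cos(dec) * math.sin(lha)
    north = (math.sin(dec) * math.cos(lat)
             - math.cos(dec) * math.sin(lat) * math.cos(lha))
    azimuth = math.degrees(math.atan2(east, north)) % 360.0
    return altitude, azimuth


alt, az = solve_navigational_triangle(declination_deg=0.0, latitude_deg=0.0, lha_deg=90.0)
print(f"altitude {alt:.1f}, azimuth {az:.1f}")
```

In this example, a star on the celestial equator with an LHA of 90°, seen from the Earth's equator, sits on the horizon due west (altitude about 0°, azimuth 270°). Note that the azimuth is poorly defined when the star is at the zenith, since both the east and north components go to zero.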

The Angle Computer

The job of the Angle Computer was to solve the navigational triangle mechanically.

Its inputs were the star's declination, the aircraft's latitude, and the local hour angle.

From these, it computed the star's altitude and azimuth at the aircraft's current position.13

The concept behind the Angle Computer is that it physically modeled the celestial sphere with a half-sphere,

2 5/8" in radius.

A star pointer was mechanically positioned on the surface of this sphere, using the star's declination and local

hour angle, adjusted by the latitude of the viewer.

The star pointer moved a readout mechanism that translated the star's position into the azimuth and

altitude at the specified location.

Thus, the Angle Computer mechanically converted between the coordinate systems by using a physical

representation, solving the navigational triangle.
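The physical model can be imitated in software by treating the star pointer as a point on a unit sphere and tilting the sphere by the latitude. The sketch below is only an analogy to the mechanism, not a description of its gearing. The axis conventions (x toward the point where the observer's meridian crosses the celestial equator, y toward the west, z toward the celestial pole) and the function name are assumptions I've chosen for illustration.

```python
import math


def star_to_horizon(declination_deg: float, lha_deg: float,
                    latitude_deg: float) -> tuple[float, float]:
    """Place a star on a unit sphere, tilt by latitude, read out (altitude, azimuth)."""
    dec, lha, lat = (math.radians(v) for v in (declination_deg, lha_deg, latitude_deg))

    # Star pointer in the equatorial frame (assumed axes: x on the meridian
    # at the celestial equator, y toward the west, z toward the celestial pole).
    x = math.cos(dec) * math.cos(lha)
    y = math.cos(dec) * math.sin(lha)
    z = math.sin(dec)

    # Tilt the frame so the zenith points "up" for an observer at this latitude.
    north = -x * math.sin(lat) + z * math.cos(lat)
    east = -y
    up = x * math.cos(lat) + z * math.sin(lat)

    altitude = math.degrees(math.asin(max(-1.0, min(1.0, up))))
    azimuth = math.degrees(math.atan2(east, north)) % 360.0
    return altitude, azimuth


# Near the pole, a star circles the zenith with little change in altitude.
for lha in range(0, 360, 90):
    alt, az = star_to_horizon(declination_deg=45.0, lha_deg=lha, latitude_deg=89.0)
    print(f"LHA {lha:3d}: altitude {alt:.2f}, azimuth {az:.2f}")
```

At a latitude of 89°, the altitude of a star at declination 45° stays within about a degree of 45° while the azimuth sweeps all the way around.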

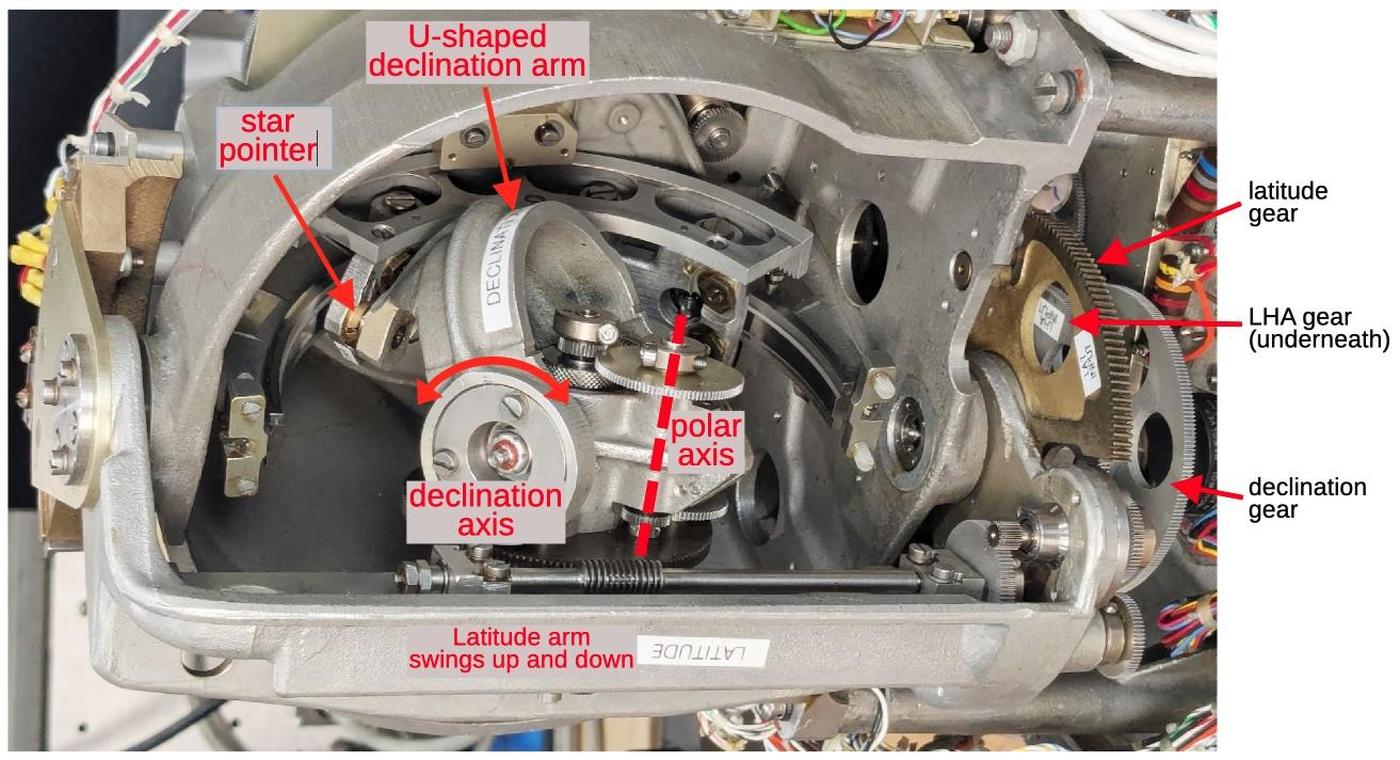

The diagram below shows how the star pointer is positioned on the two-dimensional surface of the sphere,

using a complicated mechanism inside the sphere.

The U-shaped declination arm swings up and down, corresponding to the star's declination (angle above

the celestial equator).

Meanwhile, the declination arm constantly rotates around the polar axis, as specified by the LHA (Local Hour Angle).

In one (sidereal) day, the mechanism will make a full cycle, corresponding to the Earth's spin.

Finally, the latitude arm moves the mechanism up or down, corresponding to the viewer's latitude.

On the right, three gears provide the inputs for latitude, LHA, and declination.

The input mechanism for the Angle Computer. The photo has been rotated 90° to better match the

Earth's rotation. Rotation around the polar axis corresponds to the Earth's daily rotation. Note that the star pointer will hit the end of the semicircular azimuth arc at some point; this corresponds to the star moving to the horizon and setting.

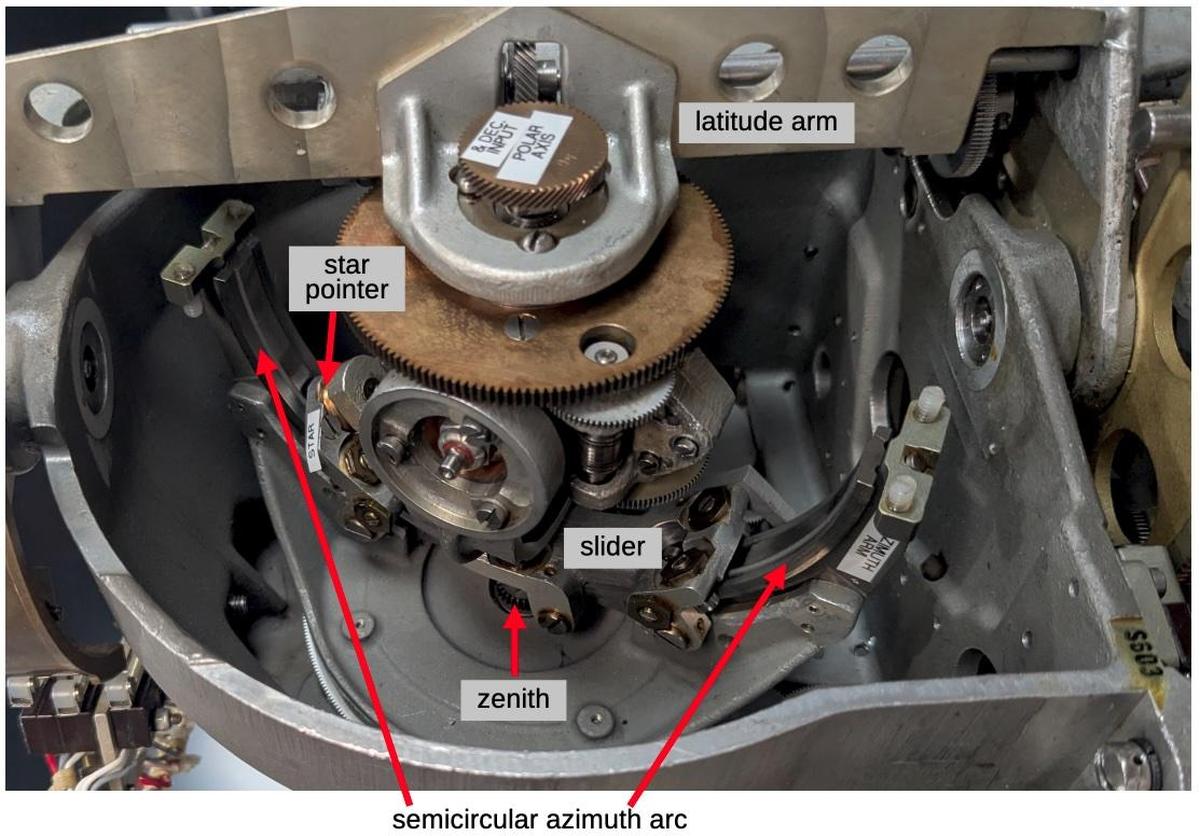

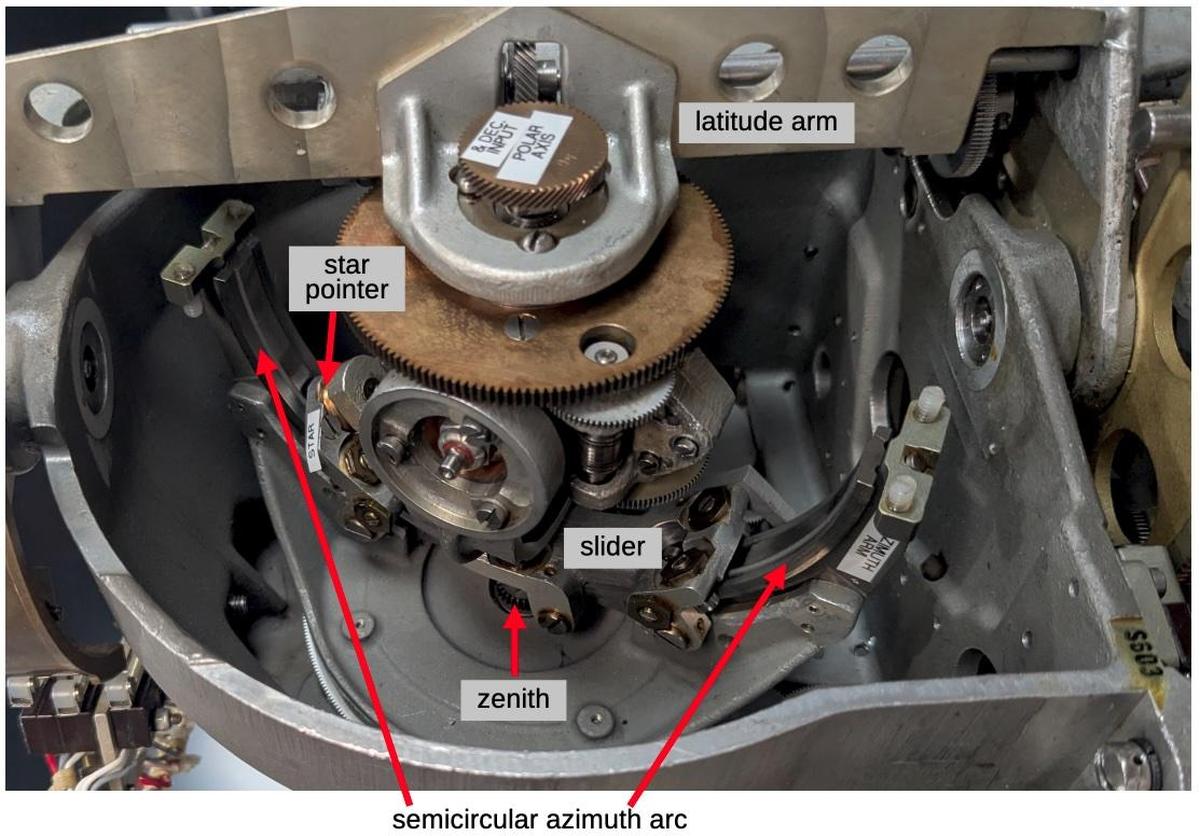

A separate mechanism provides the altitude and azimuth outputs, driven by the star pointer.

The key is the semicircular azimuth arc, which represents the arc from the viewer's horizon to

the zenith, oriented to a particular azimuth.

The star pointer is attached to the azimuth arc through a slider, so as the star pointer moves,

it moves the slider along the azimuth arc and also rotates the azimuth arc.

Specifically, the azimuth arc represents the line from the horizon to the zenith at a particular azimuth.

The position of the

slider on the azimuth arc corresponds to the altitude, from 0° at the horizon to 90° at the zenith.14

The azimuth arc rotates around the zenith point, which is at the back of the azimuth arc; this rotation

indicates the azimuth value.

As the azimuth arc rotates, it turns a gear at the zenith, providing the azimuth output.

The slider arc has teeth on it; as the slider moves, these teeth rotate a second gear, providing the altitude output.

The output mechanism for the Angle Computer. The mechanism is in a different position from the

previous diagram. In particular, the latitude arm has been raised to a near-polar latitude and the photograph is from

the other side of the latitude arm. At this latitude, the polar axis is almost lined up with the zenith. As the LHA changes, the star will move in a circle, rotating the azimuth arc but causing little change in altitude. This corresponds to the real-world situation of stars moving in a circle around the zenith, if you're near the pole.

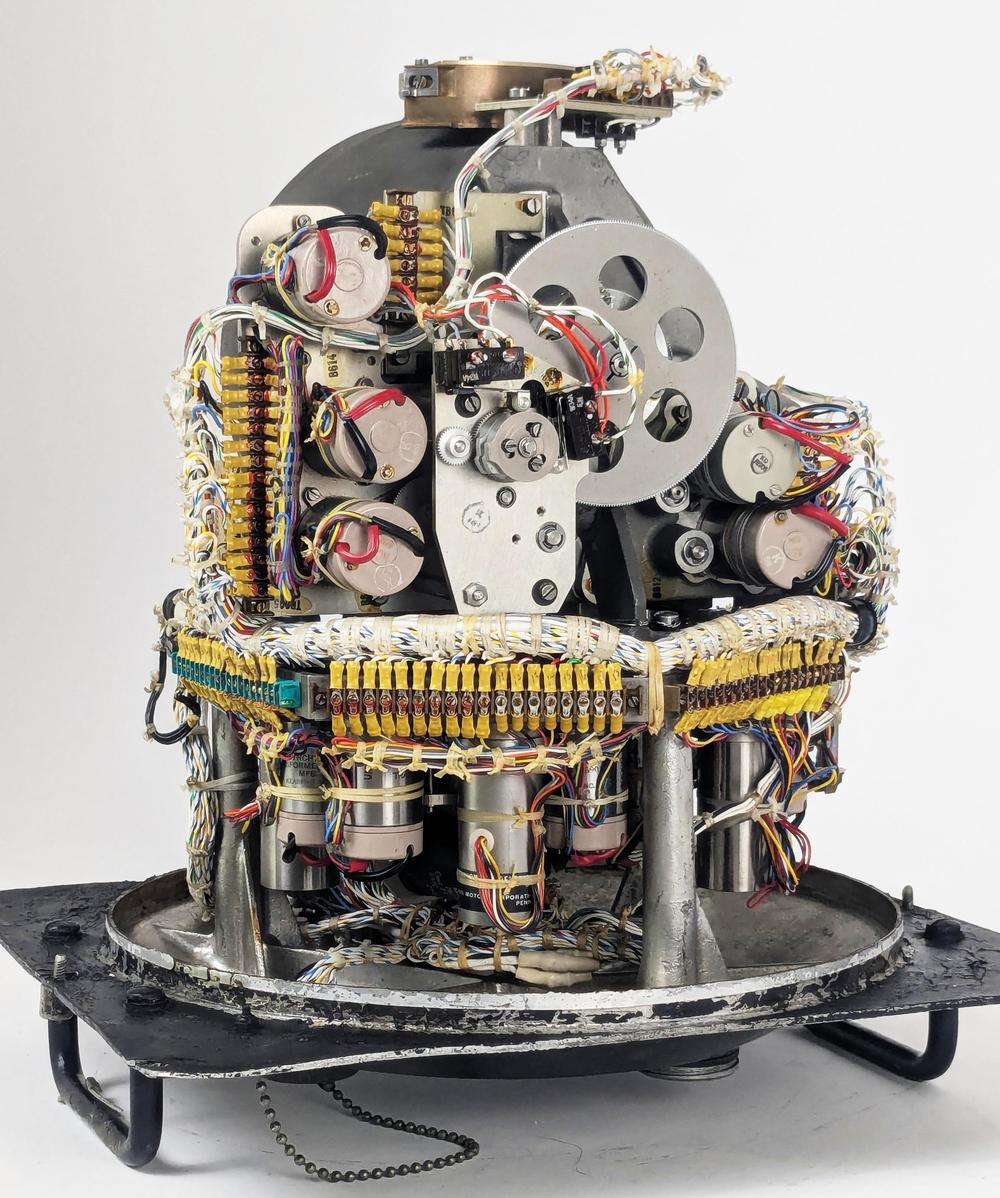

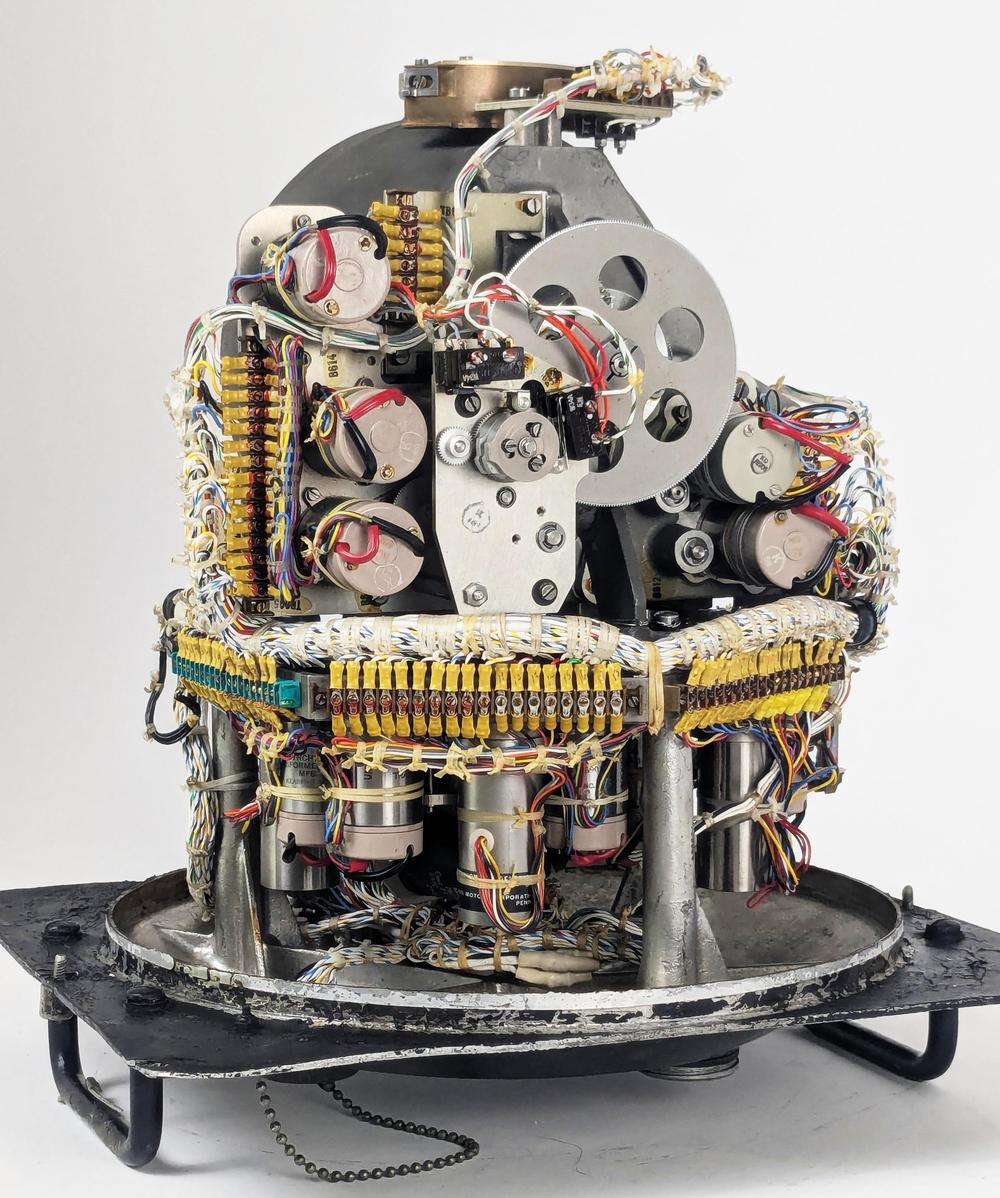

From the back, the numerous synchro transmitters, synchro control transformers, and motors are visible.

Even though the computation itself is mechanical, the Angle Computer has numerous electrical components.

In the top half,

the synchro transmitters provide electrical outputs of the azimuth and altitude. (A synchro transmitter

uses fixed and moving coils to convert a shaft rotation angle into a three-wire electrical signal.)

The large gear provides the altitude output.

In the lower half, the longer cylinders are motors that move the Angle Computer's mechanisms.

The motors are directed to rotate to a particular position through a feedback loop:

synchro control transformers provide feedback to the external servo amplifiers that power the motors.
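To give a feel for how a three-wire signal can encode an angle, here is a highly idealized sketch. It assumes the three signal amplitudes vary as sines spaced 120° apart, which is how synchros are generally described. It ignores the AC carrier, scaling, and wiring conventions of the real hardware, and the function names are hypothetical.

```python
import math


def synchro_transmit(shaft_deg: float) -> tuple[float, float, float]:
    """Idealized transmitter: three signal amplitudes spaced 120 degrees apart."""
    theta = math.radians(shaft_deg)
    return (math.sin(theta),
            math.sin(theta - math.radians(120.0)),
            math.sin(theta + math.radians(120.0)))


def synchro_decode(s1: float, s2: float, s3: float) -> float:
    """Recover the shaft angle (0-360 degrees) from the three idealized amplitudes."""
    sin_t = s1
    # sin(t + 120) - sin(t - 120) = sqrt(3) * cos(t)
    cos_t = (s3 - s2) / math.sqrt(3.0)
    return math.degrees(math.atan2(sin_t, cos_t)) % 360.0


signals = synchro_transmit(200.0)
print(signals, synchro_decode(*signals))
```

Using three signals rather than one means the angle can be recovered unambiguously over a full rotation, and a common scale error on all three wires cancels out in the decoding.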

The back of the Angle Computer.

Partially disassembling the Angle Computer shows the complex gear trains inside, linking the

synchros, motors, and the physical mechanism.

The squat brass-colored units in the lower center are differential assemblies to add or subtract signals.15

One of the drive motors, a long cylinder, is visible in the lower right.

Gear trains inside the Angle Computer.

The Line of Position

Although the heading was the primary output from the Astro Compass,

the Astro Compass could also help determine the location of the aircraft, using a technique called

the celestial line of position.

This technique was discovered in 1837 and became heavily used for navigating ships with a sextant.

It could also be used onboard an aircraft.

To understand the line of position, suppose you go outside and find a star directly overhead.

If you measure the altitude—the angle from the horizon to the star—with a sextant, the angle will be 90°, since it is overhead.

Now, suppose you teleport 60 nautical miles away in any direction.

The sextant will now show an altitude of 89° to the star, since a nautical mile is conveniently defined to match

one minute of angle (one-sixtieth of a degree).

Alternatively, if you measure an altitude of 89° to the star, you know you are 60 nautical miles away from the original

point under the star (called the sub-stellar point).

Likewise, if you measure 88° to the star, you're on a circle with radius of 120 nautical miles around the sub-stellar point.

If you measure, say, an altitude of 40°, you know you're on a very large circle with radius of 3000 nautical miles around the sub-stellar point.
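The rule behind these numbers is simple enough to write down directly. This is a minimal sketch with a hypothetical function name; it uses the idealized relationship of one nautical mile per minute of arc and ignores real-world corrections such as atmospheric refraction.

```python
def circle_of_position_radius_nm(altitude_deg: float) -> float:
    """Distance in nautical miles from the sub-stellar point for a measured altitude."""
    zenith_distance_deg = 90.0 - altitude_deg
    return zenith_distance_deg * 60.0  # 60 arc-minutes per degree, 1 nm per arc-minute


for altitude in (90.0, 89.0, 88.0, 40.0):
    print(altitude, circle_of_position_radius_nm(altitude))
```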

So how does this help with navigation?

Suppose you're on a boat in the middle of the Pacific and you have a rough idea of where you are, say within 100 miles, but you want to find your exact position.

Put a dot on the map where you think you are.

Next, pick a star and work out what the angle to the star should be from your position.

Measure the altitude with your sextant.

Suppose you expected 50° but measured 51°. You now know that you're somewhere on a circle with radius of

2340 nautical miles around the distant sub-stellar point. This doesn't seem very useful.

However, since the angle was 1° more than expected, you know that the circle is 60 nautical miles closer to that

distant point than your estimated position.

Moreover, since you have some idea of where you are, you know that you're on the part of this

circle near your estimated location.

And since you're looking at a small part of a big circle, you can approximate it by a line.

So you can go back to your map, move 60 nautical miles closer to the star from your estimated point,

and draw a perpendicular line.

This is your line of position, and you know that you're on this line (more or less).
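The plotting step can be sketched as follows, treating the chart near the estimated position as a flat plane with east and north axes in nautical miles. That flat-plane treatment, the function name, and the star azimuth in the example are my assumptions for illustration.

```python
import math


def line_of_position(expected_alt_deg: float, measured_alt_deg: float,
                     star_azimuth_deg: float):
    """Return (intercept_nm, (east_nm, north_nm), line_bearing_deg).

    The intercept is positive toward the star. The (east, north) point is where
    the line of position crosses the bearing to the star, relative to the
    estimated position. The line itself runs perpendicular to the star's azimuth.
    """
    intercept_nm = (measured_alt_deg - expected_alt_deg) * 60.0
    az = math.radians(star_azimuth_deg)
    east_nm = intercept_nm * math.sin(az)
    north_nm = intercept_nm * math.cos(az)
    line_bearing_deg = (star_azimuth_deg + 90.0) % 180.0
    return intercept_nm, (east_nm, north_nm), line_bearing_deg


# Expected 50 degrees, measured 51 degrees, star assumed to be due east (azimuth 90).
print(line_of_position(50.0, 51.0, 90.0))
```

With the star due east, the line of position runs north-south, 60 nautical miles east of the estimated position.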

Knowing that you're on a line isn't too useful, but you can repeat the process with a

star in a different part of the sky.

Maybe this time the angle is 2° smaller than expected, so you can draw a line of position

120 nautical miles farther away from your estimated position, in a different direction.

The two lines cross, indicating a position where you (probably) are.16

Normally, you repeat the process with a third star, giving you three lines of position,

providing a position and an idea of its accuracy.
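Finding where two lines of position cross is a small linear-algebra problem if the chart is treated as a flat plane around the estimated position. The sketch below makes that assumption; the function name and the sample azimuths are hypothetical. Each line is the set of points whose distance toward the star equals the intercept.

```python
import math


def fix_from_two_lines(az1_deg: float, intercept1_nm: float,
                       az2_deg: float, intercept2_nm: float) -> tuple[float, float]:
    """Return the (east_nm, north_nm) offset of the fix from the estimated position.

    Each line of position satisfies east*sin(az) + north*cos(az) = intercept,
    with the intercept positive toward the star. Solved with Cramer's rule.
    """
    a1, b1 = math.sin(math.radians(az1_deg)), math.cos(math.radians(az1_deg))
    a2, b2 = math.sin(math.radians(az2_deg)), math.cos(math.radians(az2_deg))
    det = a1 * b2 - a2 * b1
    if abs(det) < 1e-9:
        raise ValueError("lines of position are parallel; pick stars at different azimuths")
    east = (intercept1_nm * b2 - intercept2_nm * b1) / det
    north = (a1 * intercept2_nm - a2 * intercept1_nm) / det
    return east, north


# Star 1 due north, 60 nm toward it; star 2 due east, 120 nm away from it.
print(fix_from_two_lines(0.0, 60.0, 90.0, -120.0))
```

The parallel-lines check reflects why the second star should be in a different part of the sky: two stars at the same or opposite azimuths give lines that never cross cleanly.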

The Astro Compass used the display above to show the

star's azimuth and the distance in miles from the assumed location to the line of

position, called the Altitude Intercept.

With this information, the navigator could draw a line of position on the map.

The navigator repeated the process with two more stars to get a location fix.17

Conclusion

The Angle Computer is a relic from a time when a mechanical analog computer was the best way to solve a

problem, but the computer was also electrical.

Although a mechanical apparatus solved the navigational triangle, it was moved into position by motors, and

the output was transmitted electrically through wires.

Moreover, the Angle Computer was driven by electronic amplifiers and feedback circuits that used both vacuum

tubes and transistors.

The designers of the Astro Compass considered multiple approaches to computing the navigational triangle (details).

The first was to use small electromechanical devices called resolvers that convert a physical rotation into sine and cosine values.

By combining six resolvers with amplifiers, the altitude and azimuth could be obtained.

The resolver solution was rejected as being too large and requiring a precision power supply.

The second approach was to use a digital computer to determine the solution.

This solution was rejected because in 1963, a digital computer was expensive, slow, and less reliable.

The final approach, which was adopted, was to build a mechanical, physical model of the celestial sphere.

Thus, the Angle Computer resided at the uneasy intersection of physical mechanisms, electrical circuits, vacuum

tubes, and solid-state electronics, soon to be obsoleted by digital computers.

I plan to write more about the Astro Compass system. For updates, follow me on

Bluesky (@righto.com),

Mastodon (@kenshirriff@oldbytes.space),

or RSS.

Thanks to Richard for supplying the Astro Compass hardware.

AI statement: I didn't use AI to write this article (details).

Notes and references

-

The Angle Computer is labeled "Computer, Altitude-Azimuth, Automatic Astro Compass Type MD-1" and also

has an "MD-3" sticker. Presumably, MD-3 is an upgrade of the MD-1.

The system is also known as the "Kollsman KS-50-03 Astro Tracking System" (or maybe 50-08).

There are a few documents available on the system, including

Operating Instructions Handbook,

Operating Instructions Pocket Manual,

a technical article The Celestial Tracker as an Astro Compass,

and a patent Celestial Data Computer.

The web page PRC68: Automatic Astro Compass Type MD-1 has

an extensive collection of links.

CuriousMarc has a YouTube series on the Astro Tracker, starting with part 1.

If you want to learn more about celestial navigation, this World War II training film describes the process in detail. ↩

-

From the outside, the Angle Computer is an uninteresting black cylinder with connectors on the end.

The cylinder was sealed with a soldered metal band that we removed with a blowtorch.

It was pressurized with dry nitrogen through the fill valve in the center, a Schrader valve just

like you'd find on a tire.

The Angle Computer is packaged in a nondescript black cylinder.

↩

-

The Astro Compass needed to know approximately where in the sky to find the star, in order to

point its sensor in the right direction.

The direction didn't need to be exact because the Astro Compass performed a spiral search pattern

to find the star.

This search pattern covered ±4° in bearing and ±2.5° in altitude.

In comparison, the Moon is 0.5° wide, so it's a fairly large target area. ↩

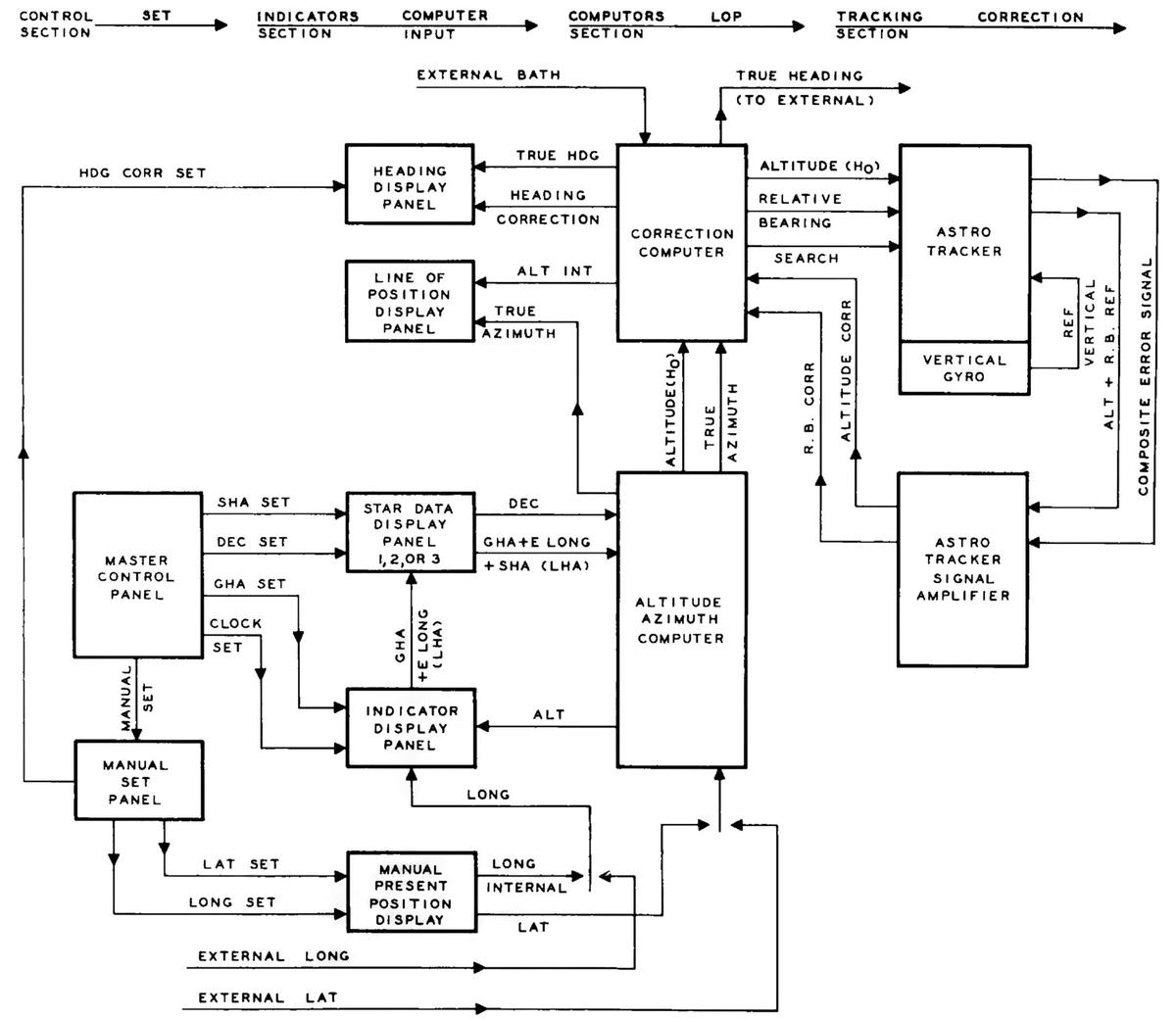

-

The diagram below shows the physical connections of the components of the Astro Compass.

A physical diagram of the Astro Compass. The Angle Computer is called the Alt Az Computer in this diagram.

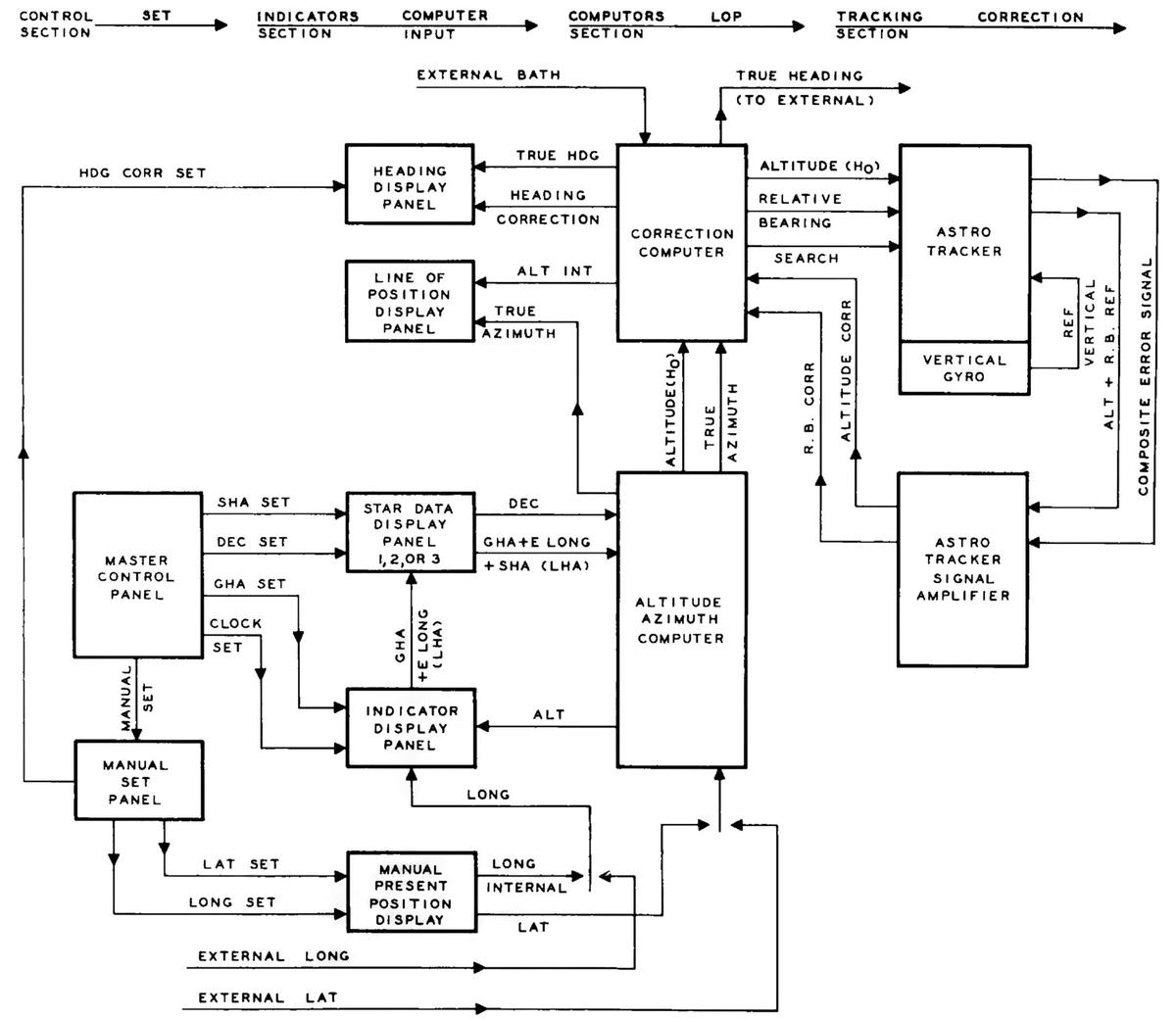

For a slightly different perspective, the diagram below shows the flow of data in the Astro Compass.

↩

-

The Astro Compass

normally gets the latitude and longitude from the bombing computer.

It normally gets the approximate heading (called the BATH, Best Available True Heading)

from the magnetic compass.

These values can all be entered manually if necessary. ↩

-

Greenwich Mean Time is now mostly obsolete, replaced by UTC (Coordinated Universal Time).

Greenwich Mean Time is based on when the sun reaches its highest point over Greenwich, England (longitude 0°).

In solar time, the sun reaches its highest point at

exactly noon.

Unfortunately, the Earth's orbit is elliptical, so the length of a solar day varies throughout the year, by almost a minute.

Since it's nice to have a constant 24-hour day, Mean Time was introduced. The idea is to average

out the length of the day throughout the year, so each day is exactly 24 hours, even though the sun

is no longer overhead exactly at noon.

UTC is essentially the same as GMT, but defined by atomic clocks rather than the position of the sun over Greenwich.

They can vary by up to 0.9 seconds, with a leap second added to UTC to keep them in sync. ↩

-

The stars are all moving in different directions, but for most stars, the visible change

in position (the proper motion) is very small.

However, comparing the 1960 Air Almanac with the 2026 Air Almanac shows many of the listed

stars have moved a degree or more

due to the precession of the equinox.

The change varies from star to star, both because the angular change depends on the star's

location and because the SHA is exaggerated as you get closer to the poles

(details). ↩

-

Note that the azimuth is discontinuous at the zenith.

To see this, imagine a star passing directly overhead: point your arm at the horizon and then swing it up until it is pointing straight up.

To continue, you need to instantaneously spin around 180° and then lower your arm.

The discontinuity in azimuth is important for the Angle Computer, since it can't instantaneously change the azimuth by 180°.

To avoid this problem, the Angle Computer has cams and microswitches to keep

the altitude below 85°. (Otherwise, the azimuth arc will jam up instead of rotating smoothly.)

The Astro Tracker also has declination limits of +90° and -47° and a lower altitude limit of -6°.

The latitude is limited to the range between -2° and +90°; the system automatically switches

hemispheres so both the North and South latitudes are usable. ↩

-

One annoyance is that the length of a day is slightly different if you look at the sun (a solar day) versus looking at the stars (a sidereal day).

A solar day is the standard 24-hour day, where the Earth rotates once and the sun returns to its previous position (approximately).

But if you look at the stars, it takes a bit less time (23 hours, 56 minutes, and 4 seconds) for

the stars to return to their previous position.

The problem is that during one year, the Earth swings from one side of the sun to the other side and then back to the first side.

From the perspective of the stars, this is an "extra" revolution, so there are 366.25 sidereal days

in a year, compared to 365.25 solar days in a year. (I.e., it's an "off-by-one" error.)

This makes each sidereal day slightly shorter.

You can also think of this as the sun moving around the celestial sphere once per year, with the

sun's position against the stars constantly changing. ↩

-

Celestial navigation usually uses the sidereal hour angle (SHA) to measure the star's position

relative to the meridian.

Astronomers often use the right ascension instead.

The right ascension is measured in the opposite direction and is measured in hours instead of

degrees.

They are related by the formula RA = (360° - SHA) / 15, with RA in hours and SHA in degrees. ↩

-

The Earth's axis also wobbles on a cycle of 18.6 years because the Earth isn't exactly spherical.

For many purposes, this wobble is averaged out and the "mean equinox" is used.

The physical equinox is called the "apparent equinox".

Greenwich Mean Sidereal Time (GMST) is measured with respect to the mean equinox, while

Greenwich Apparent Sidereal Time (GAST) is measured with respect to the apparent equinox.

The difference between the mean equinox and the apparent equinox is called the

"equation of the equinoxes".

The difference between the two equinoxes is small, less than about 1.1 seconds. ↩

-

The angle of 90°-declination is sometimes called co-declination, the complement of declination,

i.e., the angle down from the pole.

Similarly, 90°-latitude is sometimes called co-latitude.

The triangle can be solved using the spherical law of sines and the spherical law of

cosines.

An alternative, which makes more sense to me, is to find the answer by applying

rotation matrices to change the coordinate system.

Details are here, and Wikipedia has a convenient summary. ↩

-

It may seem like there is a chicken-and-egg situation with navigation since you need to know

your position in order to compute the star's altitude and azimuth, and you need to know the

aircraft's heading to know which direction to point the telescope.

In fact, you just need to know the approximate latitude, longitude, and heading (within 4°), and then

the system generates a more accurate latitude, longitude, and heading. The process can be repeated

until the values converge.

Moreover, the Astro Compass is just one of the instruments that the navigator uses.

The magnetic compass can provide an approximate heading, and dead reckoning or inertial navigation

can provide an approximate location. The Astro Compass can use these to generate more accurate

information, which in turn can improve the accuracy of the dead reckoning or inertial navigation. ↩

-

Since the azimuth arc is a semicircle (180°), it might seem that the star pointer could move 180°

in altitude along the azimuth arc. This wouldn't make sense, since the altitude ranges from 0° (horizon)

to 90° (zenith). The explanation is that the slider is a quarter-circle (90°). Thus, the star

position can only move 90° before the other end of the slider hits the end of the azimuth arc. ↩

-

The differential gears are necessary because the axes aren't mechanically independent.

For instance, as the latitude arm swings up and down, it also moves the declination and LHA drive

shafts, causing unwanted rotation along these axes.

The differentials subtract out the latitude motion from the declination and LHA inputs, so

the resulting movements on each axis are independent. ↩

-

Technically, two different circles on a sphere can cross at 0, 1, or 2 points.

In practice, there will be two intersections, but one intersection is very far away

and can be ignored. ↩

-

Several factors complicated the navigator's job.

By the time the navigator completed a measurement, the aircraft could have moved dozens of miles,

so the navigator needed

to adjust the lines of position based on this movement.

But the navigator didn't know exactly how much the aircraft had moved, due to wind and other factors.

Thus, even with the Astro Compass, the navigator needed to deal with uncertainty, cross-checking between

different measurements to try to get the best results despite constant sources of error. ↩

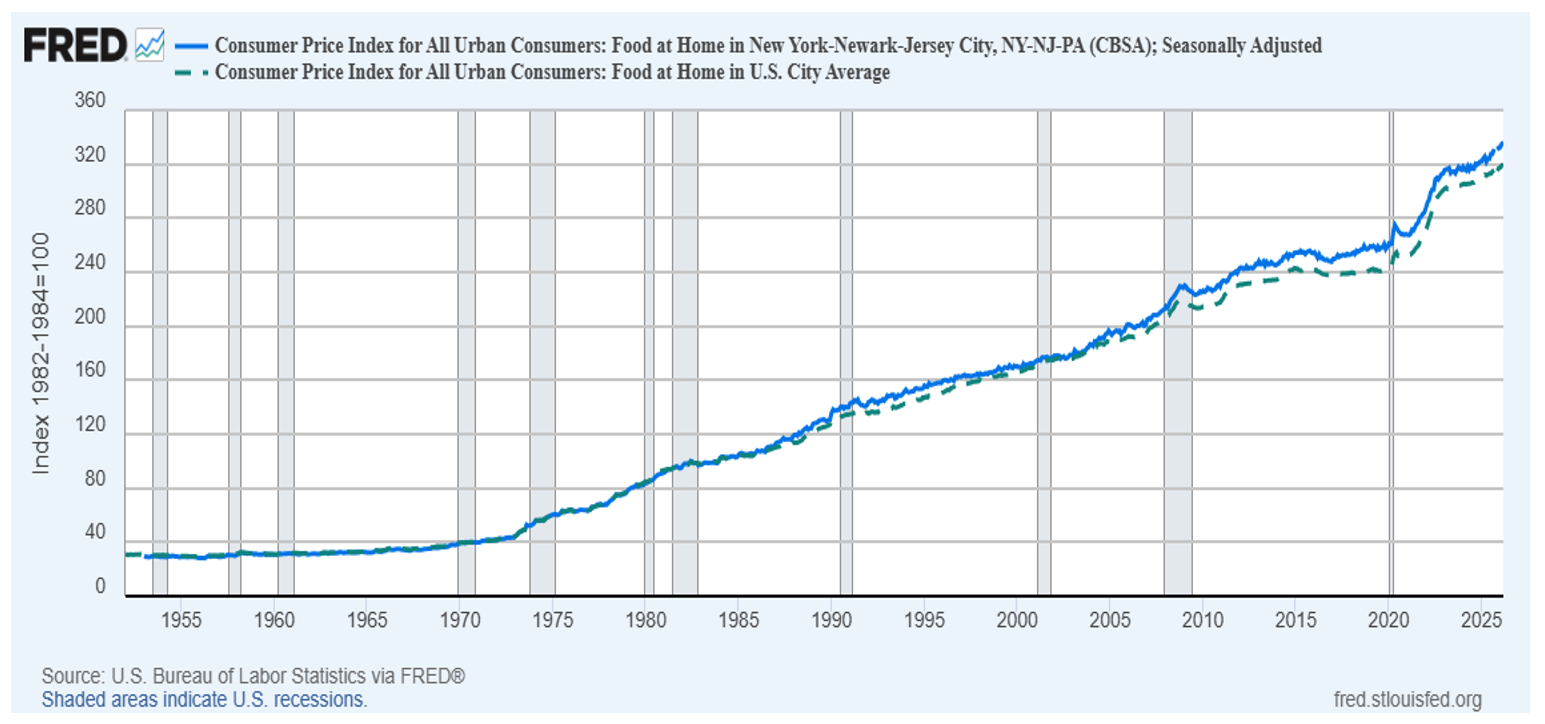
