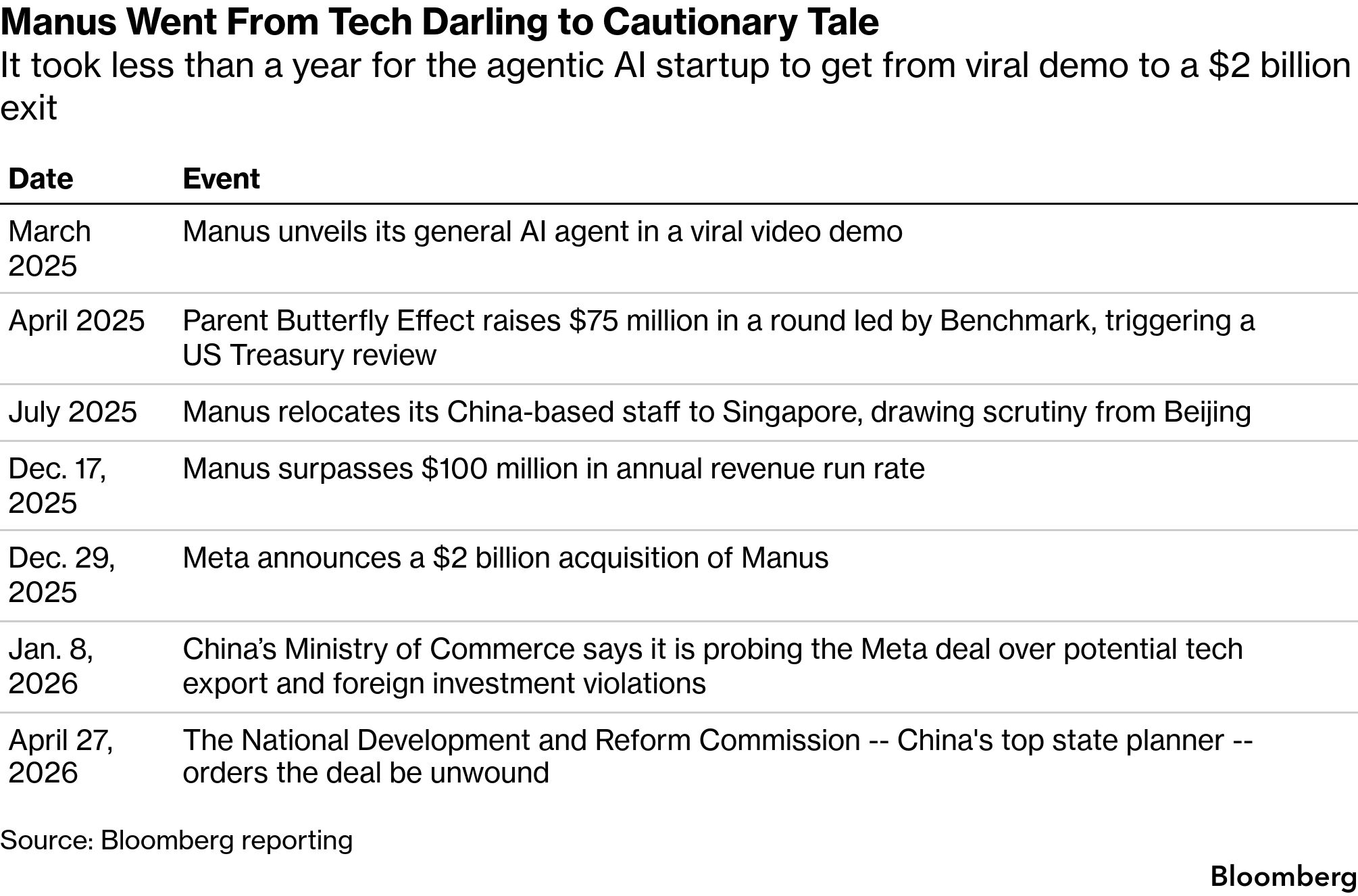

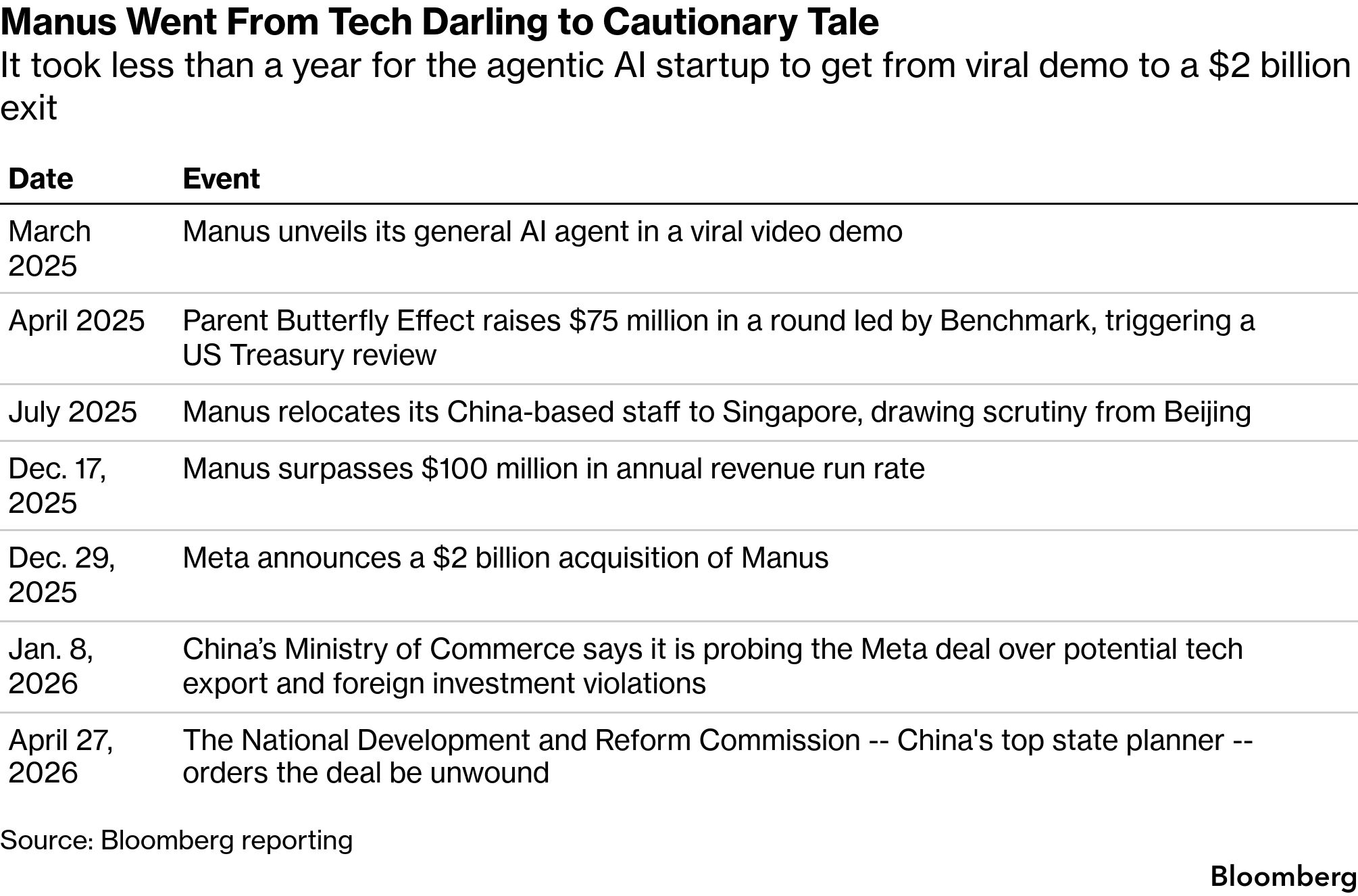

The AI startup Manus, once hailed as a breakthrough that would challenge Silicon Valley’s dominance, is turning into a cautionary tale for Chinese entrepreneurs after Beijing authorities ordered Meta Platforms Inc. to unwind its $2 billion takeover of the company.

With a chilling 54-character decree from the top state planner, Beijing demonstrated its determination to prevent the transfer of sensitive technology to geopolitical foes at all costs. That follows a recent decision to bar major tech firms including ByteDance Ltd. and Moonshot AI from taking American capital without approval, and a clampdown on offshore Chinese companies seeking to list in Hong Kong.

Taken together, the regulatory setbacks usher in an uncertain era for the country’s rapidly expanding AI industry, spurring a raft of activity behind the scenes. Entrepreneurs, financiers and companies are scrambling to avoid becoming another Manus, whose Chinese founders moved their business to Singapore to tap global capital. Firms are reviewing investment portfolios, overhauling ownership structures and even erecting firewalls between Chinese and American units.

“As of today, ‘the Manus Model’ is officially dead,” said Dermot McGrath, founder of ZenGen Labs, a Shanghai-based consultancy that advises tech startups about their business. “Chinese teams have punched well above their weight in AI and produced a string of unicorns, and policymakers saw the Manus maneuver as a template that could threaten the crown jewels of their innovation ecosystem in the defining technology of the decade.”

Beijing was particularly irked by the speed at which Meta wrapped up the transaction as well as the loss of pioneering agentic AI to one of Silicon Valley’s most valuable companies.

The backlash against Manus — which comes weeks before China’s Xi Jinping is due to meet US President Donald Trump — underscores Beijing’s overarching ambition to surpass the US in technological and economic might. Meta declined to comment.

Chinese AI aspirants once viewed Manus as a blueprint for global success: a viral outfit created by a trio of local entrepreneurs who made a big splash in the US before the Meta buyout. But even as startups like DeepSeek draw massive interest from domestic investors, the admiration for Manus is quickly souring as founders and venture investors grapple with fundamental resets to their funding and corporate structures following intensifying regulatory pressure.

Startups that dreamed of following MiniMax Group Inc. and Zhipu to Hong Kong listings are now seeking advice from their venture backers to avoid getting stuck in IPO limbo, people familiar with the matter said. At least three investment houses are in discussions with their founders about whether to dismantle their offshore entities: the so-called red-chips once deemed a key step toward a debut.

That comes as regulators recently instructed some AI and robotics firms, including DeepSeek rival StepFun, to unwind those structures in order to qualify for a listing in the city, they said, asking for anonymity to discuss private matters.

Manus Went From Tech Darling to Cautionary Tale

Source: Bloomberg reporting

For firms with operations in both China and the US, the Manus fallout represents an even greater threat. Chinese billionaire Chen Tianqiao, a pioneer of China’s online gaming industry, told Bloomberg News that he’s rolled out protocols to prohibit the cross-border sharing of information or code while minimizing the movement of personnel, data and assets.

Chen Tianqiao Photographer: Poppy Lynch/Bloomberg

“The Manus incident serves as a wake-up call for all cross-border entrepreneurs,” Chen told Bloomberg News after Beijing ordered the Manus deal rolled back. “As regulatory environments across regions become increasingly complex, both founding teams and external partners — including advisors and law firms — must adopt a more cautious and rigorous approach to compliance.”

Smaller outfits see such a difficult path to global expansion that some are considering setting up shop in Singapore or Silicon Valley from day one to obscure their Chinese roots, the people said. To avoid Manus-style regulatory snares, they plan to hire China-based engineers only as a low-cost back office.

Beijing is piling on the pressure just as startups grapple with escalating geopolitical tensions. Washington has long discouraged US investors from seeding Chinese technology, particularly in sensitive areas like AI. In recent months, China-based fund managers who’ve backed leading tech companies have used so-called parallel fund structures for fundraising, Bloomberg News reported.

Those vehicles allow US investors to keep exposure to non-sensitive sectors, while opting out of industries they are restricted from putting money into. Manus-backer ZhenFund set up a vehicle to house US investors and another for other backers for its latest fund that seeks to raise about $300 million.

“The process of packaging a company to make it an attractive acquisition target for US buyers has already evolved into a full-fledged industry,” said Jenny Xiao, a San Francisco-based partner at Leonis Capital, which invests in early-stage AI startups. “Manus was the first one to actually pull it off.”

To be sure, breakouts like DeepSeek and Manus have shown investors that Chinese AI is something they can’t ignore. DeepSeek has kicked off its first external funding round, drawing interest from Tencent Holdings Ltd. and Alibaba Group Holding Ltd., while other AI upstarts like Moonshot are nearing an IPO.

The technology underpinning Manus’s viral AI agent was developed by the startup’s founders while they were living in China. When it was launched in 2025, the agent drew worldwide acclaim for its ability to automate complex tasks, from analyzing stocks to drafting sales pitches.

The following month, its parent Butterfly Effect raised $75 million in a round led by Silicon Valley’s Benchmark, valuing it at $500 million. That investment triggered a probe by the US Treasury over potential violations of restrictions on investments in sensitive technologies. Manus received queries from US officials, including whether Chinese Communist Party cadres had visited its offices and whether its AI agent was built atop Chinese foundation models, according to people familiar with the matter. Representatives for Manus and the US Treasury didn’t respond to emailed queries about the investigation.

“China’s reported use of threats and coercive exit bans against Meta and its employees is consistent with longstanding Chinese government interference in normal business transactions,” White House spokesperson Kush Desai said in an emailed statement.

The scrutiny curtailed Manus’s effort to train a smaller AI model in-house using Chinese open-source offerings. As costs ballooned, it was forced to seek a suitor for a buyout, the people said.

When Manus relocated its China-based staff to Singapore in July, it triggered alarm in Beijing. Officials eventually agreed to the departure on the condition that Manus maintain close ties to the domestic ecosystem, the people said. That understanding may have evaporated with the Meta buyout, one of the people said.

In December, Meta announced its acquisition of Manus — a rare bet on a Chinese-born team — days after the startup said it topped $100 million in annualized revenue. It wasn’t clear at the time whether Beijing would exert its authority on a transaction that technically took place beyond its borders.

“Manus may not be a strategic or critical technology for now,” said Vey-Sern Ling, managing director at Union Bancaire Privee. “But conceivably in future, some Chinese startups will come up with sensitive technologies and this case will serve as a warning to tread carefully.”

The startup’s aggressive mandate pushed it from product launch to Meta deal in less than a year. That cowboy attitude was captured by a poster hanging in its Beijing office before it relocated to Singapore: “Go big or die. There are no other options.”

— Echo Wong, Zheping Huang, Haze Fan
